
This section contains docs and information resources that guide you through the key DevOps concepts and their role in managing the DIGIT platform.

All content on this page by eGov Foundation is licensed under a Creative Commons Attribution 4.0 International License.

Download the file below to view the DIGIT Rollout Program Governance structure and process details.


An overview of the prerequisites for setting up DIGIT and some of the key capabilities to understand before provisioning the infra and deploying DIGIT.

DIGIT is the largest urban governance platform, built for billions of transactions between citizens and state governments through various municipal services and integrations. The platform is built with key capabilities such as scale, speed, integration, configurability, customizability, extensibility, multi-tenancy, and security. Here, we discuss the key requirements and capabilities.

Before setting up DIGIT, it is essential to know some key technical details about it, such as its architecture, its tech stack, and how it is packaged and deployed on various infrastructures. Some of these details are explained in the previous sections. Below are some of the key capabilities to know about DIGIT as a platform.

DIGIT is a collection of services built as RESTful APIs that follow the OpenAPI standard.

DIGIT is built on a microservices architecture (MSA).

DIGIT services are packaged as Docker images and deployed as containers.

DIGIT is deployed on Kubernetes, which abstracts the underlying cloud or infrastructure and provides a standardized platform for DIGIT deployment.

DIGIT deployment, configuration, and customization are done through Helm charts.

The Kubernetes cluster is set up as code, using tools such as Terraform or Ansible.

The OpenAPI Specification (OAS) defines a standard, programming language-agnostic interface description for REST APIs, which allows both humans and computers to discover and understand the capabilities of a service without requiring access to source code, additional documentation, or inspection of network traffic. When properly defined via OpenAPI, a consumer can understand and interact with the remote service with a minimal amount of implementation logic. Similar to what interface descriptions have done for lower-level programming, the OpenAPI Specification removes the guesswork in calling a service.
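As a rough illustration of that discoverability, the Python sketch below lists the operations a service exposes by reading nothing but its OpenAPI document. The spec fragment, its `/v1/_search` and `/v1/_create` paths, and the operation IDs are hypothetical placeholders, not an actual DIGIT API; a real consumer would load the spec that the service publishes.

```python
import json

# Hypothetical OpenAPI 3.0 fragment; a real consumer would fetch the service's spec.
SPEC = json.loads("""
{
  "openapi": "3.0.3",
  "info": {"title": "example-service", "version": "1.0.0"},
  "paths": {
    "/v1/_search": {"post": {"operationId": "searchRecords", "summary": "Search records"}},
    "/v1/_create": {"post": {"operationId": "createRecord", "summary": "Create a record"}}
  }
}
""")

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def list_operations(spec):
    """Return sorted (METHOD, path, operationId) tuples described by an OpenAPI document."""
    operations = []
    for path, path_item in spec.get("paths", {}).items():
        for key, operation in path_item.items():
            # Path items can also hold non-operation keys such as "parameters".
            if key.lower() in HTTP_METHODS:
                operations.append((key.upper(), path, operation.get("operationId")))
    return sorted(operations)


for method, path, operation_id in list_operations(SPEC):
    print(f"{method} {path} -> {operation_id}")
```

The consumer needs no knowledge of the service's source code: everything it prints comes from the specification.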

Microservices break a large application into smaller units that can be developed, enhanced, and scaled independently. Each component runs in its own container as part of a categorized, layered stack and can be managed and updated on its own, which gives better control over every component of the application. This means that developers can build applications from multiple components and write each component in the language best suited to its function, rather than having to choose a single, less-than-ideal language for everything. Optimizing software all the way down to the components of the application helps you increase the quality of your products, and no time or resources are wasted managing the effects of updating one component on another.

Application containers are arguably the best infrastructure choice for running a microservices architecture. Containers encapsulate a lightweight runtime environment for the application, presenting a consistent environment that follows the application from the developer's desktop through testing to final production deployment, and you can run containers on cloud infrastructure with physical or virtual machines.

As most modern software developers can attest, containers have provided us with dramatically more flexibility for running cloud-native applications on physical and virtual infrastructure. Kubernetes allows you to deploy cloud-native applications anywhere and manage them exactly as you like everywhere. For more details refer to the above link that explains various advantages of Kubernetes.

Kubernetes, the popular container orchestration system, is used extensively. However, it can become complex: you have to handle all of the objects (ConfigMaps, pods, etc.) and also manage the releases. Both can be handled with Helm, a package manager for Kubernetes designed to package, configure, and deploy applications and services onto Kubernetes clusters in a standard way, which helps the ecosystem adopt a standard approach to deployment and customization.

To set up DIGIT successfully, the following requirements need to be in place:

Scheduled Job Handling

API Gateway

Container Management

Resource and Storage Handling

Fault Tolerance

Load Balancing

Distributed Metrics

Application Runtime and Packaging

App Deployment

Configuration Management

Service Discovery

CI / CD

Virtualization

Hardware & Storage

OS & Networking

SSL Configuration

Infra-as-code

Docker

DNS Configuration

GitOps

SecOps

On-premises/private cloud accounts

Interface to access and provision the required infra

In the case of an SDC, NIC, or private DC, this will be VPN access to an allocated VLAN

SSH access to the VMs/machines

Infra Skills

Public cloud

Managed Kubernetes services like AKS or EKS or GKE on Azure, AWS and GCP respectively

Private Clouds (SDC, NIC)

Private clouds such as VMware, OpenStack, Nutanix, and others may or may not offer Kubernetes as a managed service. If they do, you may only need to estimate the worker nodes, depending on the number of ULBs and the DIGIT municipal services you opt for.

If no managed Kubernetes service is available, provision the Kubernetes cluster from plain VMs following the general Kubernetes setup instructions, and add worker nodes.

Operations Skills

Understanding of Linux, containers, VM Instances, Load Balancers, Security Groups/Firewalls, Nginx, DB Instance, Data Volumes

Experience with Kubernetes, Docker, Jenkins, Helm, Infra-as-code, Terraform

Experience in DevOps/SRE practice on microservices and modern infrastructure

Provisioning the Kubernetes cluster in any of the following:

Private State datacenter (SDC) or

National Cloud (NIC)

Setting up the persistent disk volumes to attach to DIGIT backbone stateful containers such as:

ZooKeeper

Kafka

Elasticsearch

Setting up the Postgres DB

On a public cloud, provision a managed Postgres instance such as RDS.

On a private cloud, provision a Postgres DB on a VM with backup and HA/DRS.

Preparing the deployment configuration for the required DIGIT services using Helm templates from InfraOps, such as the following:

Preparing DIGIT service helm templates to deploy on the Kubernetes cluster

K8s Secrets

K8s ConfigMaps

Environment variables for each microservice

Deploy the stable released version of DIGIT and the required services

Setting up Jenkins jobs to build and bake images and to deploy the components with rolling updates

This document aims to put together all the items needed to create a proper training plan for a partner team that will work on the eDCR service used for plan scrutiny.

The technical skill sets required to work on the eDCR service are listed below. Teams planning to attend the training are expected to be well versed in these technologies before they attend eGov training sessions.

Java and REST APIs

Postgres

Maven

Spring framework

Basics of 2D CAD Drawings

Git

Postman

YAML/JSON

Strong working knowledge of Linux commands, VM instances, networking, and storage

Session, cache, and token handling (Redis server)

Understanding of VM types, Linux OS types, LoadBalancer, VPC, Subnets, Security Groups, Firewall, Routing, DNS

Experience setting up CI like Jenkins and creating pipelines

Artifact repositories such as Nexus, Verdaccio, Docker Hub, etc.

Experience in setting up and renewing SSL certificates

GitOps, Git branching, PR review process, rules, hooks, etc.

JBoss WildFly, Apache, Nginx, Redis, and Postgres

Trainees are expected to have laptops/desktops configured as described below, with all the software required to run the eDCR service application:

A laptop for hands-on training with 16 GB RAM, preferably running Ubuntu

All developers need to have Git IDs

Install VSCode/IntelliJ/Eclipse

Postman

Install LibreCAD

Knowledge resources on general topics are available online, and eGov provides resources for DIGIT services. References for each of the important topics are provided here. Trainees are required to self-study all the software mentioned in the prerequisites using the shared reference materials.

Kubernetes has changed the way organizations deploy and run their applications, and it has created a significant shift in mindsets. While it has already gained a lot of popularity and more and more organizations are embracing the change, running Kubernetes in production requires care.

Although Kubernetes is open source and has its share of vulnerabilities, making the right architectural decisions can prevent a disaster from happening.

You need a deep understanding of how Kubernetes works and how to enforce best practices so that you can run a secure, highly available, production-ready Kubernetes cluster.

Although Kubernetes is a robust container orchestration platform, its sheer complexity, with multiple moving parts, can overwhelm administrators.

That is why Kubernetes has a large attack surface, and hardening the cluster is an absolute must if you run Kubernetes in production.

Kubernetes has a massive number of configuration options, and even if you configure most things correctly, chances are that you will misconfigure a few.

I will describe a few best practices that you can adopt if you are running Kubernetes in production. Let’s find out.

If you are running your Kubernetes cluster in the cloud, consider using a managed Kubernetes service such as AKS, EKS, or GKE.

A managed cluster comes with some level of hardening already in place, and, therefore, there are fewer chances to misconfigure things. A managed cluster also makes upgrades easy, and sometimes automatic. It helps you manage your cluster with ease and provides monitoring and alerting out of the box.

Since Kubernetes is open source, vulnerabilities are discovered quickly and security patches are released regularly. You need to keep your cluster up to date with the latest security patches, so add an upgrade schedule to your standard operating procedures.

Having a CI/CD pipeline that runs periodically for executing rolling updates for your cluster is a plus. You would not need to check for upgrades manually, and rolling updates would cause minimal disruption and downtime; also, there would be fewer chances to make mistakes.

That would make upgrades less of a pain. If you are using a managed Kubernetes cluster, your cloud provider can cover this aspect for you.

It goes without saying that you should patch and harden the operating system of your Kubernetes nodes, so that an attacker has the smallest possible attack surface.

You should upgrade your OS regularly and ensure that it is up to date.

Since version 1.6, Kubernetes has had role-based access control (RBAC) enabled by default. Ensure that it is enabled on your cluster.

You also need to ensure that legacy attribute-based access control (ABAC) is disabled. Enforcing RBAC gives you several advantages as you can now control who can access your cluster and ensure that the right people have the right set of permissions.

RBAC does not stop at securing access to the cluster by kubectl clients; it also covers pods running within the cluster, nodes, proxies, the scheduler, and volume plugins.

Only provide the required access to service accounts and ensure that the API server authenticates and authorizes them every time they make a request.
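To make the evaluation model concrete, here is a minimal Python sketch of how an RBAC authorizer decides a request, assuming simplified rules made of API groups, resources, and verbs. RBAC permissions are purely additive: a request is allowed only if some rule bound to the subject matches, and everything else is denied. The `PolicyRule`, `Binding`, and `is_allowed` names and the user `alice` are illustrative; the sketch ignores details such as resource names, group subjects, and ClusterRoles referenced from RoleBindings.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class PolicyRule:
    api_groups: Tuple[str, ...]
    resources: Tuple[str, ...]
    verbs: Tuple[str, ...]

    def matches(self, api_group: str, resource: str, verb: str) -> bool:
        # "*" acts as a wildcard, as it does in Kubernetes RBAC rules.
        return (
            ("*" in self.api_groups or api_group in self.api_groups)
            and ("*" in self.resources or resource in self.resources)
            and ("*" in self.verbs or verb in self.verbs)
        )


@dataclass
class Binding:
    subject: str
    rules: List[PolicyRule]
    namespace: Optional[str] = None  # None models a cluster-wide ClusterRoleBinding


def is_allowed(bindings, subject, verb, api_group, resource, namespace):
    """Default deny: allow only if some rule bound to the subject matches."""
    for binding in bindings:
        if binding.subject != subject:
            continue
        if binding.namespace is not None and binding.namespace != namespace:
            continue  # a namespaced binding only grants access inside its namespace
        if any(rule.matches(api_group, resource, verb) for rule in binding.rules):
            return True
    return False


# Example: a developer limited to the hypothetical "team-a" namespace.
developer_rules = [
    PolicyRule(("apps",), ("deployments",), ("get", "list", "create", "update")),
    PolicyRule(("",), ("pods", "pods/log"), ("get", "list")),  # "" is the core API group
]
bindings = [Binding("alice", developer_rules, namespace="team-a")]

print(is_allowed(bindings, "alice", "create", "apps", "deployments", "team-a"))  # True
print(is_allowed(bindings, "alice", "create", "apps", "deployments", "team-b"))  # False
print(is_allowed(bindings, "alice", "delete", "", "pods", "team-a"))  # False
```

Because there are no deny rules, restricting access is always a matter of granting less.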

Running your API server on plain HTTP in production is a terrible idea. It exposes your cluster to man-in-the-middle attacks and opens up multiple security holes.

Always use transport layer security (TLS) to ensure that communication between kubectl clients and the API server is secure and encrypted.

Be aware of any non-TLS ports you expose for managing your cluster. Also ensure that internal clients such as pods running within the cluster, nodes, proxies, scheduler, and volume plugins use TLS to interact with the API server.
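kubectl handles TLS through its kubeconfig file, but the same requirements apply to any custom tooling that calls the API server directly. The Python sketch below, using only the standard `ssl` module, builds a client context that verifies the server against a CA bundle, refuses protocol versions older than TLS 1.2, and optionally presents a client certificate for mutual TLS. The function name and the file paths you pass in are assumptions (kubeadm clusters, for instance, keep the cluster CA at `/etc/kubernetes/pki/ca.crt`).

```python
import ssl
from typing import Optional


def make_api_client_context(ca_file: Optional[str] = None,
                            client_cert: Optional[str] = None,
                            client_key: Optional[str] = None) -> ssl.SSLContext:
    """Build a client TLS context for calling an HTTPS API endpoint.

    ca_file: CA bundle used to verify the server (None falls back to system CAs).
    client_cert / client_key: optional client certificate for mutual TLS.
    """
    context = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    context.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse legacy protocol versions
    # Already the defaults of create_default_context; set explicitly for clarity.
    context.verify_mode = ssl.CERT_REQUIRED
    context.check_hostname = True
    if client_cert:
        context.load_cert_chain(certfile=client_cert, keyfile=client_key)
    return context


context = make_api_client_context()
print(context.verify_mode == ssl.CERT_REQUIRED, context.check_hostname)  # True True
```

The resulting context can be passed to, for example, `urllib.request.urlopen(url, context=context)` when calling the API server's HTTPS endpoint.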

While it might be tempting to create all resources in the default namespace, using namespaces gives you many advantages. Not only do they segregate your resources into logical groups, they also let you define security boundaries around the resources in each namespace.

Namespaces logically behave as a separate cluster within Kubernetes. You might want to create namespaces based on teams, or based on the type of resources, projects, or customers depending on your use case.

You can then define resource quotas, limit ranges, user permissions, and RBAC at the namespace level.

Avoid binding ClusterRoles to users and service accounts, instead provide them namespace roles so that users have access only to their namespace and do not unintentionally misconfigure someone else’s resources.

Cluster Role and Namespace Role Bindings
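As an illustration of namespace-scoped access, the Python sketch below generates a Role and a RoleBinding that let one user manage common workloads in a single namespace. The role name `app-developer`, the user `alice`, the namespace `team-a`, and the chosen resources and verbs are hypothetical; the printed JSON could be saved to a file and applied with `kubectl apply -f`.

```python
import json


def namespaced_role_manifests(namespace, user, role_name="app-developer"):
    """Return a Role and RoleBinding that confine `user` to one namespace."""
    role = {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "Role",  # a namespaced Role, not a cluster-wide ClusterRole
        "metadata": {"name": role_name, "namespace": namespace},
        "rules": [
            {
                "apiGroups": ["", "apps"],
                "resources": ["pods", "pods/log", "services", "deployments"],
                "verbs": ["get", "list", "watch", "create", "update", "patch"],
            }
        ],
    }
    binding = {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "RoleBinding",
        "metadata": {"name": f"{role_name}-{user}", "namespace": namespace},
        "subjects": [
            {"kind": "User", "name": user, "apiGroup": "rbac.authorization.k8s.io"}
        ],
        "roleRef": {
            "kind": "Role",
            "name": role_name,
            "apiGroup": "rbac.authorization.k8s.io",
        },
    }
    return {"apiVersion": "v1", "kind": "List", "items": [role, binding]}


print(json.dumps(namespaced_role_manifests("team-a", "alice"), indent=2))
```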

You can use Kubernetes network policies that work as firewalls within your cluster. That would ensure that an attacker who gained access to a pod (especially the ones exposed externally) would not be able to access other pods from it.

You can create Ingress and Egress rules to allow traffic from the desired source to the desired target and deny everything else.

Kubernetes Network Policy
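A common pattern is to start from a default-deny policy and then open only the paths you need. The sketch below builds two NetworkPolicy manifests as Python dictionaries: one that denies all ingress traffic to every pod in a namespace, and one that allows pods labelled `app: frontend` to reach pods labelled `app: backend` on TCP port 8080. The labels, namespace, and port are hypothetical, and network policies are only enforced when the cluster's network plugin supports them.

```python
import json


def default_deny_ingress(namespace):
    """Deny all ingress traffic to every pod in the namespace."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-ingress", "namespace": namespace},
        # An empty podSelector selects all pods; an Ingress policy type with
        # no ingress rules means no inbound traffic is allowed.
        "spec": {"podSelector": {}, "policyTypes": ["Ingress"]},
    }


def allow_ingress(namespace, target_app, source_app, port):
    """Allow pods labelled app=<source_app> to reach app=<target_app> on a TCP port."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": f"allow-{source_app}-to-{target_app}", "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": {"app": target_app}},
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    "from": [{"podSelector": {"matchLabels": {"app": source_app}}}],
                    "ports": [{"protocol": "TCP", "port": port}],
                }
            ],
        },
    }


policies = [default_deny_ingress("team-a"), allow_ingress("team-a", "backend", "frontend", 8080)]
print(json.dumps({"apiVersion": "v1", "kind": "List", "items": policies}, indent=2))
```

Egress can be locked down the same way, but a default-deny egress policy also blocks DNS lookups, so it needs matching egress allow rules, including one for the cluster DNS service on port 53.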

Do not share the kubernetes-admin config file within your team; instead, create a separate user account for every user and grant each one only the access they need. Bear in mind that Kubernetes does not maintain an internal user directory, so you need the right solution in place to create and manage your users.

Once you create the user, you can generate a private key and a certificate signing request (CSR) for the user, which Kubernetes can then sign with the cluster CA to issue a client certificate for the user.

You can then securely share the signed certificate with the user, who can use it together with their private key in kubectl to authenticate securely with the API server.

Configuring User Accounts

You can provide granular access to user and service accounts with RBAC. Let us consider a typical organization where you can have multiple roles, such as:

Application developers: These need access only to a namespace, not the entire cluster. Ensure that they can deploy and troubleshoot their applications only within their namespace. You might want to allow application developers to create only ClusterIP services, and grant permission to define ingresses for them only to network administrators.

Network administrators: You can give network admins access to networking features such as ingresses, and privileges to create external services.

Cluster administrators: These are sysadmins whose main job is to administer the entire cluster. They should be the only people with cluster-admin access, and only to the extent necessary for their roles.

The above is not etched in stone, and you can have a different organization policy and different roles, but the only thing to keep in mind here is that you need to enforce the principle of least privilege.

That means that individuals and teams should have only the right amount of access they need to perform their job, nothing less and nothing more.

It does not stop with just issuing separate user accounts and using TLS to authenticate with the API server. It is an absolute must that you frequently rotate and issue credentials to your users.

Set up an automated system that periodically revokes the old TLS certificates and issues new ones to your user. That helps as you don’t want attackers to get hold of a TLS cert or a token and then make use of it indefinitely.

Imagine a scenario where an externally exposed web application is compromised, and someone has gained access to the pod. In that scenario, they would be able to access the secrets (such as private keys) and target the entire system.

The way to protect from this kind of attack is to have a sidecar container that stores the private key and responds to signing requests from the main container.

In case someone gets access to your login microservice, they would not be able to gain access to your private key, and therefore, it would not be a straightforward attack, giving you valuable time to protect yourself.

Partitioned Approach

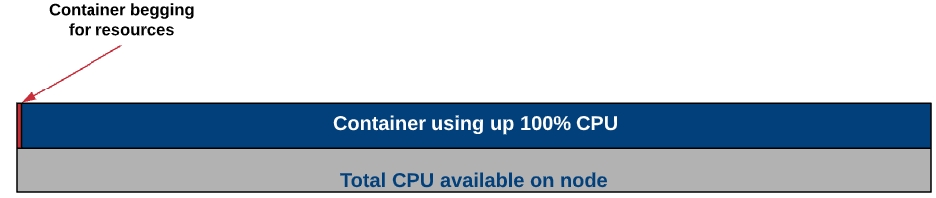

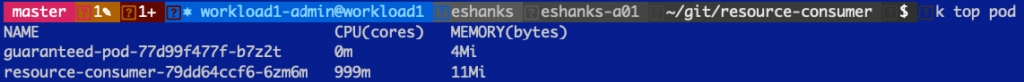
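The partitioned approach can be illustrated with a small, in-process Python simulation. Here `SigningSidecar` stands in for the sidecar container that alone holds the key, and `LoginService` stands in for the exposed main container, which can only ask for signatures. Both class names are hypothetical, HMAC with a shared secret replaces private-key signing to keep the sketch dependency-free, and in a real pod the isolation comes from the container boundary (the main container would call the sidecar, for example over localhost), not from Python objects.

```python
import hashlib
import hmac
import secrets


class SigningSidecar:
    """Stands in for the sidecar container: the only component holding the key."""

    def __init__(self) -> None:
        self._key = secrets.token_bytes(32)  # generated here, never handed out

    def sign(self, payload: bytes) -> str:
        return hmac.new(self._key, payload, hashlib.sha256).hexdigest()

    def verify(self, payload: bytes, signature: str) -> bool:
        return hmac.compare_digest(self.sign(payload), signature)


class LoginService:
    """Stands in for the exposed main container: it can only request signatures."""

    def __init__(self, request_signature) -> None:
        # In a real pod this would be a call to the sidecar, e.g. over localhost.
        self._request_signature = request_signature

    def issue_token(self, user: str) -> str:
        payload = f"user={user}"
        signature = self._request_signature(payload.encode())
        return f"{payload}.{signature}"


sidecar = SigningSidecar()
login = LoginService(sidecar.sign)
token = login.issue_token("alice")
payload, signature = token.rsplit(".", 1)
print(sidecar.verify(payload.encode(), signature))  # True
```

A compromise of the login service exposes the ability to request signatures while it is compromised, but not the key itself.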

The last thing you would want as a cluster-admin is a situation where a poorly written microservice code that has a memory leak can take over a cluster node causing the Kubernetes cluster to crash. That is an extremely important and generally ignored area.

As a developer, you can set resource requests and limits at the pod level; as an administrator, you can set them at the namespace level. You can use resource quotas to limit the amount of CPU, memory, or persistent disk a namespace can allocate.

Quotas also let you limit the number of pods, volumes, or services that can be created within a namespace. You can also use limit ranges, which define the minimum and maximum resources that each pod or container in the namespace can request.

That will limit users from seeking an unusually large amount of resources such as memory and CPU.

Specifying a default resource limit and request on a namespace level is generally a good idea as developers aren’t perfect. If they forget to specify a limit, then the default limit and requests would protect you from resource overrun.
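The interplay between namespace defaults and quotas can be sketched in Python. The sketch below assumes simplified integer units (millicores for CPU, MiB for memory) and hypothetical namespace values: `apply_defaults` fills in missing requests and limits the way LimitRange defaults do, and `fits_quota` checks whether admitting a new container keeps the summed limits within a ResourceQuota. As in Kubernetes, a container that sets a limit but no request gets a request equal to its limit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resources:
    cpu_m: int      # CPU in millicores
    memory_mi: int  # memory in MiB


# Hypothetical namespace settings standing in for a LimitRange and a ResourceQuota.
DEFAULT_REQUEST = Resources(cpu_m=100, memory_mi=128)
DEFAULT_LIMIT = Resources(cpu_m=500, memory_mi=512)
QUOTA_LIMITS = Resources(cpu_m=2000, memory_mi=2048)


def apply_defaults(container: dict) -> dict:
    """Fill in missing requests and limits the way LimitRange defaults would."""
    if "limits" not in container:
        container["limits"] = DEFAULT_LIMIT
        container.setdefault("requests", DEFAULT_REQUEST)
    else:
        # A limit without a request yields a request equal to the limit.
        container.setdefault("requests", container["limits"])
    return container


def fits_quota(existing: list, new: dict) -> bool:
    """True if admitting `new` keeps the summed limits within the namespace quota."""
    used_cpu = sum(c["limits"].cpu_m for c in existing)
    used_mem = sum(c["limits"].memory_mi for c in existing)
    return (used_cpu + new["limits"].cpu_m <= QUOTA_LIMITS.cpu_m
            and used_mem + new["limits"].memory_mi <= QUOTA_LIMITS.memory_mi)


running = [apply_defaults({}) for _ in range(3)]  # 3 x 500m CPU, 3 x 512Mi memory
print(fits_quota(running, apply_defaults({})))  # True: exactly 2000m and 2048Mi
print(fits_quota(running + [apply_defaults({})], apply_defaults({})))  # False
```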

The ETCD datastore is the primary source of data for your Kubernetes cluster. That is where all cluster information and the expected configuration is stored.

If someone gains access to your ETCD database, all security measures will go down the drain. They will have full control of your cluster, and they can do what they want by modifying state in your ETCD datastore.

You should always ensure that only the API server can communicate with the ETCD datastore, and only over TLS with mutual authentication. You can put your ETCD nodes behind a firewall and block all traffic except traffic originating from the API server.

Do not use the master ETCD for any other purpose but for managing your Kubernetes cluster and do not provide any other component access to the ETCD cluster.

Enable encryption of your secret data at rest. That is extremely important so that if someone gets access to your ETCD cluster, they should not be able to view your secrets by just doing a hex dump of your secrets.
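Encryption at rest is configured through an EncryptionConfiguration file passed to the API server with the `--encryption-provider-config` flag. The Python sketch below generates such a configuration for Secrets with a freshly generated 32-byte key. The choice of the `secretbox` provider and the key name `key1` are assumptions; check the Kubernetes documentation for the providers recommended for your version, such as a KMS provider.

```python
import base64
import json
import secrets


def encryption_config(key_name: str = "key1") -> dict:
    """Build an EncryptionConfiguration that encrypts Secrets at rest in etcd.

    The first provider encrypts new writes; "identity" comes last so that
    Secrets written before encryption was enabled can still be read.
    """
    key = base64.b64encode(secrets.token_bytes(32)).decode("ascii")  # 32-byte key
    return {
        "apiVersion": "apiserver.config.k8s.io/v1",
        "kind": "EncryptionConfiguration",
        "resources": [
            {
                "resources": ["secrets"],
                "providers": [
                    {"secretbox": {"keys": [{"name": key_name, "secret": key}]}},
                    {"identity": {}},
                ],
            }
        ],
    }


# JSON is valid YAML, so this output can be saved as the config file directly.
print(json.dumps(encryption_config(), indent=2))
```

The generated file contains the key itself, so it must be protected on the control plane nodes. Existing Secrets stay in plaintext until they are rewritten, for example with `kubectl get secrets --all-namespaces -o json | kubectl replace -f -`.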

Containers run on nodes and therefore have some level of access to the host file system, however, the best way to reduce the attack surface is to architect your application in such a way that containers do not need to run as root.

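Use pod security controls to prevent pods from mounting HostPath volumes, as that might give them access to the host filesystem. Note that the PodSecurityPolicy resource was removed in Kubernetes 1.25; its built-in replacement is Pod Security Admission, which enforces the Pod Security Standards (such as the restricted profile) per namespace. Administrators can use a restrictive policy so that anyone who gains access to one pod cannot access another pod from there.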
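Admission controllers enforce these rules when pods are created, but a simple check helps explain what they look for. The hypothetical `pod_security_findings` function below scans a pod spec (as a Python dictionary) for a few risky settings: hostPath volumes, containers not required to run as non-root, privileged containers, and containers that allow privilege escalation. It covers only a small, non-exhaustive subset of the restricted profile and is not a replacement for it.

```python
from typing import List


def pod_security_findings(pod_spec: dict) -> List[str]:
    """Flag a few risky settings in a pod spec (a small, non-exhaustive subset)."""
    findings = []
    for volume in pod_spec.get("volumes", []):
        if "hostPath" in volume:
            findings.append(f"volume {volume['name']!r} mounts a hostPath")
    pod_context = pod_spec.get("securityContext", {})
    for container in pod_spec.get("containers", []):
        # Container-level settings override pod-level ones (simplified merge).
        context = {**pod_context, **container.get("securityContext", {})}
        name = container["name"]
        if not context.get("runAsNonRoot", False):
            findings.append(f"container {name!r} does not set runAsNonRoot")
        if context.get("privileged", False):
            findings.append(f"container {name!r} is privileged")
        # Privilege escalation is allowed unless explicitly disabled.
        if context.get("allowPrivilegeEscalation", True):
            findings.append(f"container {name!r} allows privilege escalation")
    return findings


risky_pod = {
    "volumes": [{"name": "host-root", "hostPath": {"path": "/"}}],
    "containers": [{"name": "app", "securityContext": {"privileged": True}}],
}
for finding in pod_security_findings(risky_pod):
    print(finding)
```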

Audit logging is a stable feature in Kubernetes, and I recommend you make use of it. It helps you troubleshoot and investigate what happened in case of an attack.

As a cluster-admin dealing with a security incident, the last thing you would want is that you are unaware of what exactly happened with your cluster and who has done what.

Remember that the above are just some general best practices and they are not exhaustive. You are free to adjust and make changes based on your use case and ways of working for your team.

This page explains why Kubernetes is required. It deep dives into the key benefits of using Kubernetes to run a large containerized platform like DIGIT in production environments.

The Kubernetes project was started by Google in 2014. Kubernetes has since become the de facto standard for deploying containerized applications at scale in private, public, and hybrid cloud environments, and the largest public cloud platforms now provide managed services for it. A few years back, Red Hat, Mesosphere, Pivotal, VMware, and Nutanix redesigned their implementations around Kubernetes and collaborated with the Kubernetes community to build the next-generation container platform, incorporating key Kubernetes features such as container grouping, overlay networking, layer 4 routing, and secrets. Today, many organizations and technology providers are adopting Kubernetes at a rapid pace.

One of the fundamental design decisions behind this cluster manager is its ability to deploy existing applications that run on VMs without any changes to the application code. At a high level, any application that runs on VMs can be deployed on Kubernetes simply by containerizing its components. This is achieved through its core features: container grouping, container orchestration, overlay networking, container-to-container routing with a layer 4 virtual IP-based routing system, service discovery, support for running daemons, deployment of stateful application components, and, most importantly, the ability to extend the container orchestrator to support complex orchestration requirements.

At a very high level, Kubernetes provides a set of dynamically scalable hosts for running workloads in containers and uses a set of management hosts, called masters, to provide an API for managing the entire container infrastructure.

That's just a glimpse of what Kubernetes provides out of the box. The next few sections will go through its core features and explain how it can help applications to be deployed on it in no time.

A containerized application can be deployed on Kubernetes from a Deployment definition with a simple CLI command, for example `kubectl create deployment <application-name> --image=<container-image> --port=<container-port>`.

One of the key features of Kubernetes is its service discovery and internal routing model, provided by a cluster DNS add-on (originally based on SkyDNS; CoreDNS is the default in current versions) and a layer 4 virtual IP-based routing system. These features provide internal routing for application requests using Services. A set of pods created via a ReplicaSet can be load balanced within the cluster network using a Service, which is connected to its pods through selector labels. Each Service is assigned a unique IP address and a hostname derived from its name, and it distributes requests among its pods (round-robin or random, depending on the kube-proxy mode). Services also support client-IP-based session affinity for applications that require it. A Service can define a collection of ports, and the properties defined for the Service apply to all of its ports in the same way. Therefore, in a scenario where session affinity is needed only for a given port while all other ports should use the default routing, multiple Services may need to be used.
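The routing behaviour can be modelled in a few lines of Python. In the sketch below, the hypothetical `ServiceRouter` spreads requests over a Service's endpoints in round-robin order, or pins each client IP to one endpoint when `ClientIP` session affinity is enabled. It is a simplification: the real selection happens in kube-proxy's rules (random in iptables mode), and real session affinity expires after a configurable timeout.

```python
import itertools
from typing import Dict, Iterable, Optional


class ServiceRouter:
    """Toy model of a Service spreading requests over its pod endpoints."""

    def __init__(self, endpoints: Iterable[str], session_affinity: Optional[str] = None):
        self.endpoints = list(endpoints)
        self.session_affinity = session_affinity  # None or "ClientIP"
        self._next_endpoint = itertools.cycle(self.endpoints)
        self._pinned: Dict[str, str] = {}  # client IP -> endpoint

    def pick(self, client_ip: str) -> str:
        if self.session_affinity == "ClientIP":
            # Pin each client to the endpoint it was first routed to.
            if client_ip not in self._pinned:
                self._pinned[client_ip] = next(self._next_endpoint)
            return self._pinned[client_ip]
        return next(self._next_endpoint)


pods = ["10.244.1.5", "10.244.2.7", "10.244.3.9"]
router = ServiceRouter(pods)
print([router.pick("192.168.0.10") for _ in range(4)])  # cycles through all three pods
sticky = ServiceRouter(pods, session_affinity="ClientIP")
print({sticky.pick("192.168.0.10") for _ in range(4)})  # a single pod
```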

Kubernetes Services are implemented by a component called kube-proxy. A kube-proxy instance runs on each node and has supported three proxy modes: userspace, iptables, and IPVS. The userspace mode has since been removed (in Kubernetes 1.26), and iptables is the default on Linux.

In the first proxy mode, userspace, kube-proxy itself acts as a proxy server and delegates requests accepted by an iptables rule to the backend pods. In this mode, kube-proxy operates in user space and adds an additional hop to the message flow.

In the second proxy mode, iptables, kube-proxy creates a set of iptables rules that forward incoming requests from clients directly to the ports of the backend pods at the network layer, without adding an additional hop in the middle. This mode is much faster than the userspace mode because forwarding happens in kernel space and no extra proxy server is involved.

Kubernetes Services can be exposed to external networks in two main ways. The first is node ports, which expose ports on the nodes that forward traffic to the service ports. The second is a load balancer configured via an ingress controller, which delegates requests to Services by connecting to the same overlay network. An ingress controller is a background process, which may run in a container, that listens to the Kubernetes API and dynamically configures and reloads a given load balancer according to a set of Ingress resources. An Ingress defines routing rules to Services based on hostnames and context paths.
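To show how an ingress controller applies hostname and path rules, here is a minimal Python sketch. `route` picks the Service for a request by matching the host exactly and the path by prefix, preferring the longest matching prefix, which loosely follows Ingress `Prefix` path semantics (matching is done per path segment, so `/api` does not match `/apiv1`). The hostnames, paths, and Service names are hypothetical, and exact-match paths and wildcard hosts are left out.

```python
from typing import List, Optional, Tuple

Rule = Tuple[str, str, str]  # (host, path prefix, backend service)


def prefix_matches(prefix: str, path: str) -> bool:
    """Segment-wise prefix match: "/api" matches "/api/v1" but not "/apiv1"."""
    wanted = [segment for segment in prefix.split("/") if segment]
    actual = [segment for segment in path.split("/") if segment]
    return actual[: len(wanted)] == wanted


def route(rules: List[Rule], host: str, path: str) -> Optional[str]:
    """Return the backend service for a request, or None if no rule matches."""
    matches = [
        (len(prefix), service)
        for rule_host, prefix, service in rules
        if rule_host == host and prefix_matches(prefix, path)
    ]
    if not matches:
        return None
    # The longest matching prefix takes precedence.
    return max(matches, key=lambda match: match[0])[1]


rules = [
    ("example.org", "/", "frontend"),
    ("example.org", "/api", "api-gateway"),
    ("example.org", "/api/reports", "report-service"),
]
print(route(rules, "example.org", "/api/reports/42"))  # report-service
print(route(rules, "example.org", "/apiv1"))  # frontend
```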

Once an application is deployed on Kubernetes as a Deployment, it can be exposed to the external network through a load balancer, for example with `kubectl expose deployment <application-name> --type=LoadBalancer --name=<service-name>`.

Exposing a deployment this way creates a Service of type LoadBalancer and maps it to the pods using the selector labels defined when the pods were created. As a result, depending on how the Kubernetes cluster has been configured, a load balancer is created on the underlying infrastructure to route requests to the given pods, either via the service or directly.

Applications that need to persist data on the filesystem can use volumes to mount storage devices into ephemeral containers, much as volumes are used with VMs. Kubernetes loosely couples physical storage devices and containers by introducing an intermediate resource called a PersistentVolumeClaim (PVC). A PVC defines the requested storage size and access mode (ReadWriteOnce, ReadOnlyMany, or ReadWriteMany) and links a storage device to a volume defined in a pod. Binding can be done statically, using pre-created PersistentVolumes (PVs), or dynamically, using a persistent storage provider. In both approaches, a PVC is bound to a PV one-to-one, and depending on the configuration, data is preserved even if the pods are terminated. Depending on the access mode, multiple pods can connect to the same disk and read from or write to it.
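Static binding can be pictured as a matching problem. The Python sketch below binds a claim to the smallest free PersistentVolume that offers enough capacity and every requested access mode, and binds it one-to-one. The class names, capacities in GiB, and volume names are illustrative assumptions; the real controller also considers storage classes, label selectors, and volume modes.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass
class PersistentVolume:
    name: str
    capacity_gi: int
    access_modes: FrozenSet[str]
    claimed_by: Optional[str] = None


@dataclass
class Claim:
    name: str
    request_gi: int
    access_modes: FrozenSet[str]


def bind(claim: Claim, volumes: List[PersistentVolume]) -> Optional[PersistentVolume]:
    """Bind the claim to the smallest free PV that satisfies size and access modes."""
    candidates = [
        pv for pv in volumes
        if pv.claimed_by is None
        and pv.capacity_gi >= claim.request_gi
        and claim.access_modes <= pv.access_modes  # every requested mode is offered
    ]
    if not candidates:
        return None  # with dynamic provisioning, a new PV would be created instead
    best = min(candidates, key=lambda pv: pv.capacity_gi)
    best.claimed_by = claim.name  # binding is one-to-one
    return best


volumes = [
    PersistentVolume("pv-small", 5, frozenset({"ReadWriteOnce"})),
    PersistentVolume("pv-large", 50, frozenset({"ReadWriteOnce", "ReadOnlyMany"})),
    PersistentVolume("pv-shared", 20, frozenset({"ReadWriteMany"})),
]
print(bind(Claim("kafka-data", 10, frozenset({"ReadWriteOnce"})), volumes).name)  # pv-large
```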

Kubernetes provides a resource called DaemonSets for running a copy of a pod in each Kubernetes node as a daemon. Some of the use cases of DaemonSets are as follows:

A cluster storage daemon, such as glusterd or ceph, deployed on each node to provide persistent storage.

A log collection daemon such as fluentd or logstash to be run on every node for collecting container and Kubernetes component logs.

An ingress controller pod to be run on a collection of nodes for providing external routing.

One of the most difficult tasks of containerizing applications is the process of designing the deployment architecture of stateful distributed components. Stateless components can be easily containerized as they may not have a predefined startup sequence, clustering requirements, point-to-point TCP connections, unique network identifiers, graceful startup and termination requirements, etc. Systems such as databases, big data analysis systems, distributed key/value stores, and message brokers, may have complex distributed architectures that may require the above features. Kubernetes introduced StatefulSets resource for supporting such complex requirements.

At a high level, StatefulSets are similar to ReplicaSets, except that they handle the startup sequence of pods and uniquely identify each pod to preserve its state, while providing the following characteristics:

Stable, unique network identifiers.

Stable, persistent storage.

Ordered, graceful deployment and scaling.

Ordered, graceful deletion and termination.

Ordered, automated rolling updates.
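These guarantees translate into predictable names. The Python sketch below derives the stable identity of each pod in a StatefulSet: the pod name `<statefulset>-<ordinal>`, its DNS name through the governing headless Service, and the name of the PVC created from a volume claim template. The StatefulSet `kafka`, the Service `kafka-headless`, the namespace `egov`, the template name `data`, and the default `cluster.local` cluster domain are assumptions for the example.

```python
def statefulset_identities(name, service, namespace, replicas,
                           claim_template="data", cluster_domain="cluster.local"):
    """Return the stable identity of each StatefulSet pod, in creation order."""
    identities = []
    for ordinal in range(replicas):
        pod = f"{name}-{ordinal}"
        identities.append({
            "pod": pod,
            # Per-pod DNS record published through the governing headless Service.
            "dns": f"{pod}.{service}.{namespace}.svc.{cluster_domain}",
            # PVC created from the volume claim template; it survives pod restarts.
            "pvc": f"{claim_template}-{pod}",
        })
    return identities


def scale_down_order(identities):
    """Pods are removed in reverse ordinal order when the StatefulSet scales down."""
    return [identity["pod"] for identity in reversed(identities)]


for identity in statefulset_identities("kafka", "kafka-headless", "egov", 3):
    print(identity)
```

Pods are created in ordinal order (0, 1, 2) and, when scaling down, removed in reverse order, which is what `scale_down_order` returns.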

Containers generally use environment variables to parameterize their runtime configuration. However, typical enterprise applications use a considerable number of configuration files to provide the static configuration required for a given deployment. Kubernetes provides a convenient way of managing such configuration files, without bundling them into container images, through a simple resource called ConfigMap. ConfigMaps can be created from directories, files, or literal values with the `kubectl create configmap <map-name>` command and its `--from-file` or `--from-literal` options.

Once a ConfigMap is created, it can be mounted into a pod using a volume mount. With this loosely coupled architecture, the configuration of an already running system can be updated seamlessly by updating the relevant ConfigMap and executing a rolling update, which is explained in a later section. Note that ConfigMaps do not support nested folders; if the application's configuration files are in a nested directory structure, a ConfigMap needs to be created for each directory level.
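Because ConfigMap keys cannot contain `/`, and `kubectl create configmap --from-file=<directory>` only picks up the regular files directly inside that directory, a nested configuration tree has to be split up. The Python sketch below walks a directory and produces one ConfigMap manifest per directory level, naming each after its relative path. The `app-config` prefix and the naming scheme are assumptions, and directory names are not validated against Kubernetes naming rules.

```python
import os
import re
import tempfile

# Valid ConfigMap keys: alphanumerics, "-", "_" and "."; no "/" allowed.
_VALID_KEY = re.compile(r"^[-._a-zA-Z0-9]+$")


def configmaps_per_directory(root: str, prefix: str = "app-config") -> dict:
    """Return one ConfigMap manifest per directory level under `root`, keyed by name."""
    configmaps = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        data = {}
        for filename in sorted(filenames):
            if not _VALID_KEY.match(filename):
                raise ValueError(f"invalid ConfigMap key: {filename!r}")
            with open(os.path.join(dirpath, filename), encoding="utf-8") as fh:
                data[filename] = fh.read()
        if not data:
            continue  # skip directories without files
        relative = os.path.relpath(dirpath, root)
        suffix = "" if relative == "." else "-" + relative.replace(os.sep, "-").lower()
        name = prefix + suffix
        configmaps[name] = {
            "apiVersion": "v1",
            "kind": "ConfigMap",
            "metadata": {"name": name},
            "data": data,
        }
    return configmaps


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as root:
        os.makedirs(os.path.join(root, "rules"))
        with open(os.path.join(root, "application.properties"), "w") as fh:
            fh.write("server.port=8080\n")
        with open(os.path.join(root, "rules", "tax.json"), "w") as fh:
            fh.write("{}\n")
        for name, manifest in configmaps_per_directory(root).items():
            print(name, sorted(manifest["data"]))
```

Each resulting ConfigMap can then be mounted at the matching path inside the container, recreating the original directory tree.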

Similar to ConfigMaps, Kubernetes provides another valuable resource called Secrets for managing sensitive information such as passwords, OAuth tokens, and SSH keys. Without Secrets, updating such information on an already running system might require rebuilding the container images.

A secret for managing basic auth credentials can be created with, for example, `kubectl create secret generic <secret-name> --from-literal=username=<username> --from-literal=password=<password>`.

Once a secret is created, it can be read by a pod either using environment variables or volume mounts. Similarly, any other type of sensitive information can be injected into pods using the same approach.
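Secret values are stored base64-encoded, which is an encoding, not encryption. The Python sketch below builds a `kubernetes.io/basic-auth` Secret manifest and a container environment variable entry that reads one of its keys through `secretKeyRef`. The Secret name `db-credentials`, the variable name `DB_PASSWORD`, and the credentials are placeholders.

```python
import base64
import json


def basic_auth_secret(name: str, username: str, password: str) -> dict:
    """Build a kubernetes.io/basic-auth Secret manifest.

    Values under "data" are only base64-encoded, not encrypted: anyone who can
    read the Secret object, or an unencrypted etcd, can decode them.
    """
    def b64(value: str) -> str:
        return base64.b64encode(value.encode("utf-8")).decode("ascii")

    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name},
        "type": "kubernetes.io/basic-auth",
        "data": {"username": b64(username), "password": b64(password)},
    }


def env_from_secret(env_name: str, secret_name: str, key: str) -> dict:
    """Container env entry that injects one Secret key as an environment variable."""
    return {"name": env_name, "valueFrom": {"secretKeyRef": {"name": secret_name, "key": key}}}


secret = basic_auth_secret("db-credentials", "admin", "change-me")
print(json.dumps(secret, indent=2))
print(env_from_secret("DB_PASSWORD", "db-credentials", "password"))
```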

The animated image above illustrates how updates can be rolled out to an already running application using a rolling update, without system downtime. This is another invaluable feature of Kubernetes, which lets applications roll out security updates and backward-compatible changes seamlessly and with little effort. If the changes are not backward compatible, a manual blue/green deployment might need to be executed using a separate deployment definition.

A rollout that updates a container image can be executed with a simple CLI command, for example `kubectl set image deployment/<application-name> <container-name>=<container-image>:<new-tag>`.

Once a rollout is executed, its status can be checked with `kubectl rollout status deployment/<application-name>`.

An update can be rolled back to a previous state either by running the same `kubectl set image deployment` command with the previous image tag or with `kubectl rollout undo deployment/<application-name>`.
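How far a rolling update may deviate from the desired replica count is governed by a Deployment's `maxSurge` and `maxUnavailable` settings, which both default to 25%. The Python sketch below computes the resulting bounds, rounding `maxSurge` up and `maxUnavailable` down when they are given as percentages. The function names are hypothetical.

```python
import math
from typing import Tuple, Union

IntOrPercent = Union[int, str]


def resolve(value: IntOrPercent, replicas: int, round_up: bool) -> int:
    """Turn an absolute count or a percentage string such as "25%" into pods."""
    if isinstance(value, str) and value.endswith("%"):
        scaled = replicas * int(value[:-1]) / 100
        return math.ceil(scaled) if round_up else math.floor(scaled)
    return int(value)


def rollout_bounds(replicas: int, max_surge: IntOrPercent = "25%",
                   max_unavailable: IntOrPercent = "25%") -> Tuple[int, int]:
    """Return (maximum total pods, minimum available pods) during a rolling update."""
    surge = resolve(max_surge, replicas, round_up=True)
    unavailable = resolve(max_unavailable, replicas, round_up=False)
    if surge == 0 and unavailable == 0:
        # Rounding can make both zero; allow one unavailable pod so the rollout progresses.
        unavailable = 1
    return replicas + surge, replicas - unavailable


print(rollout_bounds(10))  # (13, 8)
print(rollout_bounds(3, max_surge=0, max_unavailable="25%"))  # (3, 2)
```

For example, with 10 replicas and the defaults, up to 13 pods may run during the rollout while at least 8 stay available.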

Figure 10: Kubernetes Pod Autoscaling Model

Kubernetes allows pods to be scaled manually using either ReplicaSets or Deployments, for example with `kubectl scale deployment/<application-name> --replicas=<replica-count>`.

Figure 11: Helm and Kubeapps Hub

The Kubernetes community initiated a separate project for implementing a package manager for Kubernetes called Helm. This allows Kubernetes resources such as deployments, services, config maps, ingresses, etc to be templated and packaged using a resource called chart and allows them to be configured at the installation time using input parameters. More importantly, it allows existing charts to be reused when implementing installation packages using dependencies. Helm repositories can be hosted in public and private cloud environments for managing application charts. Helm provides a CLI for installing applications from a given Helm repository into a selected Kubernetes environment.
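Configuring a chart at installation time essentially means overlaying user-supplied values on the chart's defaults. The Python sketch below approximates that merge: nested maps are merged key by key, other values (including lists) replace the defaults, and an explicit `None` (YAML `null`) removes a key. The chart values and the image name are hypothetical, and Helm's real coalescing logic handles more cases, such as subchart and global values.

```python
import copy


def merge_values(defaults: dict, overrides: dict) -> dict:
    """Overlay user-supplied values on a chart's default values.

    Nested maps are merged key by key, any other value (including lists)
    replaces the default, and an explicit None (YAML null) removes the key.
    """
    result = copy.deepcopy(defaults)
    for key, value in overrides.items():
        if value is None:
            result.pop(key, None)
        elif isinstance(value, dict) and isinstance(result.get(key), dict):
            result[key] = merge_values(result[key], value)
        else:
            result[key] = copy.deepcopy(value)
    return result


# Hypothetical chart defaults (values.yaml) and environment-specific overrides.
chart_defaults = {
    "replicaCount": 1,
    "image": {"repository": "example/service", "tag": "v1.0.0"},
    "resources": {"limits": {"memory": "512Mi"}},
}
env_overrides = {"replicaCount": 3, "image": {"tag": "v1.1.0"}, "resources": None}

print(merge_values(chart_defaults, env_overrides))
# {'replicaCount': 3, 'image': {'repository': 'example/service', 'tag': 'v1.1.0'}}
```

Keeping defaults in the chart and only the differences in per-environment values files is what lets one chart serve several deployments.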

Kubernetes was designed drawing on over a decade of experience running containerized applications at scale at Google. It has already been adopted by the largest public cloud vendors and technology providers, and it was being embraced by most software vendors and enterprises at the time this article was written. It even led to the founding of the Cloud Native Computing Foundation (CNCF) in 2015, was the first project to graduate under the CNCF, and started streamlining the container ecosystem together with other container-related projects such as CNI, containerd, Envoy, Fluentd, gRPC, Jaeger, Linkerd, Prometheus, rkt, and Vitess. The key reasons for its popularity and for such broad endorsement may be its well-thought-out design, its collaboration with industry leaders, its open-source model, and its openness to ideas and contributions.

All content on this page by eGov Foundation is licensed under a Creative Commons Attribution 4.0 International License.



By default, when you bootstrap your cluster with kubeadm, you get access to the kubernetes-admin config file, which acts as the superuser for performing all activities within your cluster.

A bootstrap token, for example, needs to be revoked as soon as you finish your activity. You can also use a credential management system that issues credentials when you need them and revokes them when you finish your work.
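kubeadm bootstrap tokens have the form abcdef.0123456789abcdef: a 6-character public token ID and a 16-character secret, both lowercase letters and digits. In practice you would create and revoke them with kubeadm token create (optionally with --ttl) and kubeadm token delete. The Python sketch below only models that short-lived lifecycle (generate, check expiry, revoke); the revocation set and the 24-hour TTL are hypothetical stand-ins for what the cluster actually stores.

```python
import re
import secrets
import string
from datetime import datetime, timedelta, timezone
from typing import Set

# kubeadm bootstrap token format: 6-char public ID, a dot, 16-char secret
TOKEN_PATTERN = re.compile(r"[a-z0-9]{6}\.[a-z0-9]{16}")
ALPHABET = string.ascii_lowercase + string.digits


def generate_token() -> str:
    token_id = "".join(secrets.choice(ALPHABET) for _ in range(6))
    token_secret = "".join(secrets.choice(ALPHABET) for _ in range(16))
    return f"{token_id}.{token_secret}"


def is_usable(token: str, expires_at: datetime, revoked_ids: Set[str], now: datetime) -> bool:
    """A token is accepted only if well-formed, not revoked and not yet expired."""
    token_id = token.split(".", 1)[0]
    return (TOKEN_PATTERN.fullmatch(token) is not None
            and token_id not in revoked_ids
            and now < expires_at)


issued_at = datetime.now(timezone.utc)
token = generate_token()
expires_at = issued_at + timedelta(hours=24)  # hypothetical TTL for the example
revoked: Set[str] = set()

print(is_usable(token, expires_at, revoked, issued_at))  # usable while the activity lasts
revoked.add(token.split(".")[0])                         # revoke as soon as the work is done
print(is_usable(token, expires_at, revoked, issued_at))  # no longer accepted
```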


The above figure illustrates the high-level application deployment model on Kubernetes. It uses a resource called a ReplicaSet for orchestrating containers. A ReplicaSet is defined by YAML or JSON-based metadata that specifies the container images, ports, number of replicas, readiness health checks, liveness health checks, environment variables, volume mounts, security rules, etc. required for creating and managing the containers. Containers are always created on Kubernetes in groups called pods, which are again a Kubernetes metadata definition, or resource. Each pod allows its containers to share the file system, network interfaces, operating system users, etc. using Linux namespaces, cgroups and other kernel features. ReplicaSets can be managed by another high-level resource called a Deployment, which provides features for rolling out updates and handling their rollbacks.
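To show where the fields mentioned above live, the sketch below builds an apps/v1 Deployment manifest as a Python dictionary: the replica count the underlying ReplicaSet maintains, the pod template with its container image and port, readiness and liveness probes, and an environment variable. The service name, image, /health probe path and SERVER_PORT variable are hypothetical placeholders. kubectl apply accepts JSON as well as YAML, so the printed output could be applied directly.

```python
import json
from typing import Any, Dict


def deployment_manifest(name: str, image: str, replicas: int, port: int) -> Dict[str, Any]:
    """Build an apps/v1 Deployment; its ReplicaSet keeps `replicas` pods of this template running."""
    labels = {"app": name}
    probe = {"httpGet": {"path": "/health", "port": port}, "periodSeconds": 10}  # hypothetical endpoint
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": dict(labels)},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": dict(labels)},  # must match the pod template labels
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {
                    "containers": [{
                        "name": name,
                        "image": image,
                        "ports": [{"containerPort": port}],
                        "readinessProbe": dict(probe),  # keep traffic away until the app is ready
                        "livenessProbe": dict(probe),   # restart the container if it stops responding
                        "env": [{"name": "SERVER_PORT", "value": str(port)}],
                    }],
                },
            },
        },
    }


if __name__ == "__main__":
    # e.g. python deploy.py > deploy.json && kubectl apply -f deploy.json
    print(json.dumps(deployment_manifest("demo-service", "nginx:1.25", 3, 80), indent=2))
```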

The third proxy mode was the IPVS proxy mode, which is similar to the second (iptables) proxy mode but uses an IPVS-based virtual server for routing requests instead of iptables rules. IPVS is a transport-layer load-balancing feature available in the Linux kernel, built on Netfilter, that provides a collection of load-balancing algorithms. The main reason for using IPVS over iptables is the performance overhead of syncing proxy rules with iptables: when thousands of services are created, updating iptables rules takes a considerable amount of time, compared to a few milliseconds with IPVS. Moreover, IPVS looks up proxy rules in a hash table, whereas iptables scans them sequentially.
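Two of the load-balancing algorithms IPVS offers are round robin (rr, the kube-proxy default) and least connection (lc); kube-proxy selects one with its --ipvs-scheduler flag. The Python sketch below illustrates both, and keys hypothetical virtual services by (cluster IP, port) in a dictionary to mirror the hash-table lookup described above. It is a conceptual model, not kernel code.

```python
import itertools
from typing import Dict, List, Tuple

# Hypothetical virtual services keyed by (cluster IP, port): one hash lookup finds the
# backends, instead of walking a chain of rules one by one.
VIRTUAL_SERVICES: Dict[Tuple[str, int], List[str]] = {
    ("10.96.0.10", 53): ["10.244.1.5:53", "10.244.2.7:53"],
    ("10.96.12.34", 80): ["10.244.1.8:8080", "10.244.2.9:8080", "10.244.3.4:8080"],
}


class RoundRobin:
    """'rr': hand out backends in a fixed rotation."""

    def __init__(self, backends: List[str]) -> None:
        self._cycle = itertools.cycle(backends)

    def pick(self) -> str:
        return next(self._cycle)


def least_connection(active: Dict[str, int]) -> str:
    """'lc': choose the backend with the fewest active connections (first one wins a tie)."""
    return min(active, key=lambda backend: active[backend])


rr = RoundRobin(VIRTUAL_SERVICES[("10.96.12.34", 80)])
print([rr.pick() for _ in range(4)])  # wraps around to the first backend
print(least_connection({"10.244.1.5:53": 4, "10.244.2.7:53": 1}))
```

Round robin ignores load entirely, which suits uniform, short requests; least connection adapts when some backends hold long-lived connections.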

Disks that support ReadWriteOnce can be mounted read-write by only a single node at a time, so they cannot be shared among pods running on different nodes. Disks that support ReadOnlyMany can be mounted by many nodes at the same time, but only in read-only mode. In contrast, as the name implies, disks with ReadWriteMany support can be mounted by many nodes for sharing data in read-write mode. Kubernetes provides volume plugins for supporting storage services available on public cloud platforms, such as AWS EBS, GCE Persistent Disk, Azure File and Azure Disk, and many other well-known storage systems such as NFS, GlusterFS, iSCSI, Cinder, etc.
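Kubernetes access modes are defined per node: ReadWriteOnce allows read-write mounting by a single node (several pods on that same node can still share the volume), ReadOnlyMany allows read-only mounting by many nodes, and ReadWriteMany allows read-write mounting by many nodes. The Python sketch below encodes that rule as a simplified compatibility check for a planned set of pods; it is an illustration, not how the scheduler or a volume plugin enforces access modes, and the node names are hypothetical.

```python
from typing import Dict, Iterable, Tuple

# access mode -> (may be mounted on several nodes?, may be mounted read-write?)
ACCESS_MODES: Dict[str, Tuple[bool, bool]] = {
    "ReadWriteOnce": (False, True),
    "ReadOnlyMany": (True, False),
    "ReadWriteMany": (True, True),
}


def can_share(access_mode: str, pod_nodes: Iterable[str], needs_write: bool) -> bool:
    """Can pods scheduled on `pod_nodes` all mount one volume with `access_mode`?"""
    multi_node, writable = ACCESS_MODES[access_mode]
    if needs_write and not writable:
        return False
    return multi_node or len(set(pod_nodes)) <= 1  # single-node modes need one distinct node


print(can_share("ReadWriteOnce", ["node-a", "node-a"], needs_write=True))  # True: same node
print(can_share("ReadWriteOnce", ["node-a", "node-b"], needs_write=True))  # False
print(can_share("ReadOnlyMany", ["node-a", "node-b"], needs_write=False))  # True
```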

A node monitoring daemon can be run on every node to monitor the container hosts.

In the above, "stable" refers to preserving network identifiers and persistent storage across pod rescheduling. Unique network identifiers are provided by headless services, as shown in the above figure. There are examples of using StatefulSets to deploy distributed systems such as Apache Kafka [14] and Apache ZooKeeper [15].
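With a headless service, each StatefulSet pod gets a DNS name of the form <pod-name>.<service>.<namespace>.svc.<cluster-domain>, where the pod name is the StatefulSet name followed by an ordinal index starting at 0. These names survive rescheduling, which is what "stable network identifiers" means in practice. The sketch below lists them; the StatefulSet, service and namespace names are hypothetical, and cluster.local is only the common default cluster domain.

```python
from typing import List


def stable_pod_names(statefulset: str, headless_service: str, namespace: str,
                     replicas: int, cluster_domain: str = "cluster.local") -> List[str]:
    """DNS names that stay the same when a StatefulSet pod is rescheduled."""
    return [
        f"{statefulset}-{ordinal}.{headless_service}.{namespace}.svc.{cluster_domain}"
        for ordinal in range(replicas)  # pods are numbered 0..replicas-1
    ]


for name in stable_pod_names("kafka", "kafka-headless", "messaging", 3):
    print(name)
```

Because each broker or ZooKeeper member keeps the same name, peers can list one another in static configuration instead of rediscovering addresses after every restart.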

In addition to ReplicaSets and StatefulSets, Kubernetes provides two additional controllers for running workloads in the background, called Jobs and CronJobs. The difference between them is that a Job runs once to completion and terminates, whereas a CronJob runs periodically on a given schedule, similar to standard Linux cron jobs.
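A CronJob's schedule uses the standard five-field cron syntax: minute, hour, day of month, month and day of week. To make "executed periodically" concrete, the Python sketch below checks whether a given minute matches a schedule. It is a simplified matcher written for illustration (no @hourly-style macros and no a-b/n ranged steps), not the parser Kubernetes uses.

```python
from datetime import datetime


def field_matches(expr: str, value: int, start: int) -> bool:
    """Match one cron field: '*', '*/n', 'a', 'a-b', or a comma-separated list of these."""
    for part in expr.split(","):
        if part == "*":
            return True
        if part.startswith("*/"):
            if (value - start) % int(part[2:]) == 0:  # steps count from the field's first value
                return True
        elif "-" in part:
            low, high = (int(bound) for bound in part.split("-"))
            if low <= value <= high:
                return True
        elif int(part) == value:
            return True
    return False


def cron_matches(schedule: str, when: datetime) -> bool:
    """True if `when` (minute precision) is a firing time for a five-field cron schedule."""
    minute, hour, day, month, weekday = schedule.split()
    cron_weekday = (when.weekday() + 1) % 7  # cron: Sunday=0; Python: Monday=0
    day_ok = field_matches(day, when.day, 1)
    weekday_ok = field_matches(weekday, cron_weekday, 0)
    # Classic cron fires when EITHER day field matches if both are restricted
    if day != "*" and weekday != "*":
        days_match = day_ok or weekday_ok
    else:
        days_match = day_ok and weekday_ok
    return (field_matches(minute, when.minute, 0)
            and field_matches(hour, when.hour, 0)
            and field_matches(month, when.month, 1)
            and days_match)


print(cron_matches("*/15 * * * *", datetime(2024, 1, 1, 10, 30)))  # True
print(cron_matches("0 2 * * 0", datetime(2024, 1, 7, 2, 0)))       # True: 7 Jan 2024 is a Sunday
```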

Deploying databases on container platforms for production use is more difficult than deploying applications, because of their requirements for clustering, point-to-point connections, replication, sharding, managing backups, etc. As mentioned previously, StatefulSets have been designed specifically to support such complex requirements, and there are a few options for running clustered databases on Kubernetes today. Vitess, YouTube's database clustering system, which is now a CNCF project, is a good option for running MySQL at scale on Kubernetes with sharding. That said, these options are still at a very early stage, and if an existing production-grade database system is available on the given infrastructure, such as RDS on AWS, Cloud SQL on GCP, or an on-premises database cluster, it may be better to choose one of those, considering the installation complexity and maintenance overhead.
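Sharding splits one logical database across several physical ones. A common scheme, similar in spirit to the key ranges Vitess uses, hashes each row's sharding key to a position in a numeric keyspace and assigns contiguous ranges of that space to shards. The Python sketch below illustrates range-based sharding with SHA-256 as the hash; it is not Vitess's hashing function, and the tenant-style keys are hypothetical.

```python
import bisect
import hashlib
from typing import List


def keyspace_id(key: str) -> int:
    """Map a sharding key to a 64-bit position using the first 8 bytes of its SHA-256 digest."""
    return int.from_bytes(hashlib.sha256(key.encode("utf-8")).digest()[:8], "big")


def shard_for(key: str, split_points: List[int]) -> int:
    """Index of the key-range shard holding `key`; n sorted split points define n + 1 shards."""
    return bisect.bisect_right(split_points, keyspace_id(key))


# Two shards split at the midpoint of the 64-bit space: [0, 2**63) and [2**63, 2**64)
SPLITS = [2 ** 63]
for tenant in ["pb.amritsar", "pb.jalandhar", "pb.ludhiana"]:
    print(tenant, shard_for(tenant, SPLITS))
```

Because shards own contiguous ranges, a hot shard can later be split in two by adding one split point, and only the keys in that range need to move.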

As shown in the above figure, this functionality can be extended by adding another resource, called a Horizontal Pod Autoscaler (HPA), against a deployment to scale the pods dynamically based on their actual resource usage. The HPA monitors the resource usage of each pod via the resource metrics API and tells the deployment to change the replica count of its ReplicaSet accordingly. Kubernetes uses an upscale delay and a downscale delay to avoid thrashing, which could otherwise occur due to frequent fluctuations in resource usage. At the time of writing, HPA supported scaling based only on CPU usage out of the box; if needed, custom metrics can also be plugged in via the custom metrics API, depending on the nature of the application.
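The Kubernetes documentation describes the core HPA calculation as desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue), with no scaling when that ratio is within a tolerance of 1.0 (0.1 by default). The Python sketch below applies the rule to CPU utilisation. The default min/max bounds are hypothetical parameters, and the delays and stabilisation behaviour mentioned above are not modelled.

```python
import math


def desired_replicas(current_replicas: int, current_utilization: float,
                     target_utilization: float, min_replicas: int = 1,
                     max_replicas: int = 10, tolerance: float = 0.1) -> int:
    """Replica count suggested by the HPA rule ceil(current * currentMetric / targetMetric)."""
    ratio = current_utilization / target_utilization
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # within tolerance: leave the deployment alone
    proposed = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, proposed))  # respect the HPA's min/max bounds


print(desired_replicas(4, current_utilization=90, target_utilization=60))  # 6
print(desired_replicas(4, current_utilization=63, target_utilization=60))  # 4
```

For example, 4 pods averaging 90% CPU against a 60% target give a ratio of 1.5, so the HPA asks for 6 pods; at 63% the ratio of 1.05 falls inside the tolerance and nothing changes.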

A wide range of stable Helm charts for well-known software applications can be found in its chart repository and also on the central Helm chart hub, Kubeapps Hub.

[1] What is Kubernetes

[2] Borg, Omega and Kubernetes

[3] Kubernetes Components

[4] Kubernetes Services

[5] IPVS (IP Virtual Server)

[6] Introduction of IPVS Proxy Mode

[7] Kubernetes Persistent Volumes

[8] Kubernetes Configuration Best Practices

[9] Custom Resources & Custom Controllers

[10] Understanding Vitess

[11] Skaffold, CI/CD for Kubernetes

[12] Kaniko, Build Container Images in Kubernetes

[13] Apache Spark 2.3 with Native Kubernetes Support

[14] Deploying Apache Kafka using StatefulSets

[15] Deploying Apache ZooKeeper using StatefulSets


| Program Execution Teams | Team Size | Roles/Actors | Proposed Composition | Timelines | Location |
|---|---|---|---|---|---|
| Program Management | 2 | Program Leader, Program Manager | State / Deputation (external consultants can be onboarded here if required) | Full-time | Central |
| Domain Experts | 1-2 | Domain experts for mCollect and Trade License | Senior resources from ULBs / State Govt Depts. who can help interpret rules and propose reforms as required | From initiation till requirement finalisation/rollout | Central |
| Coordination + Execution Team | 1 per ULB | Nodal Officers per ULB | ULB staff (Tax Inspectors / Tax Superintendents / Revenue Officers, etc.) | Full-time | Local |
| Technology Implementation Team | 6-8 | 1 Program Manager, 1 Sr. Developer, 1 Jr. Developer, 1 Tester, 1 Data Migration Specialist / DBA, 1 DevOps Lead, 1 Business Analyst, 1 Content / Documentation Expert | Outsourced | From initiation to rollout | Central |
| Data Preparation and Coordination (MIS) | 1-2 | MIS / Data / Cross-functional | Outsourced / Contract | Full-time | Central |
| Capacity Building / IEC | 2-4 | Content Developers and Trainers / Process Experts | Outsourced / Contract | For 6-12 months post rollout | Central |
| Monitoring | 4-6 | Nodal Officers, one per 2-3 districts | State / Deputation | For 3-6 months post rollout | Semi-local |
| Help Desk and Support | 4-5 | Help Desk Support Analyst | Outsourced | For 12 months post rollout / as per contract | Central |

| Title | Responsibilities | Qualifications |
|---|---|---|
| Program Manager | Day-to-day project ownership/management and coordination within the project team as well as with the State PMU | MBA / Relevant. Required: 10+ years of project/program management experience implementing Tally / ERP systems in large government deployments, with an extensive understanding of finance and accounting systems |
| Tech Lead / Sr. Developer | Decides all technical aspects/solutioning for the project in coordination with the project plan, and aligns resources to achieve project milestones | B.Tech / M.Tech / MCA. Required: 8+ years of technology/solutioning experience. Preferred: experience in deploying and maintaining large integrated platforms/systems |
| Jr. Developer / Support Engineer | Takes leadership with respect to complex technical solutions | B.Tech / M.Tech / MCA. Required: 5+ years of IT experience with skills such as Java, Core Java, PostgreSQL, Git, Linux, Kibana, Elasticsearch, JIRA (incident management), ReactJS, Spring Boot, microservices and NodeJS |
| Tester / QA | Tests developed features for completeness and accuracy with respect to defined business requirements | B.Tech / M.Tech / MCA. Required: 5+ years of IT experience, with skills such as working from user stories/use cases/requirements, executing all levels of testing (system, integration and regression) and JIRA (incident management) |
| Data Migration Specialist | Works with data collection teams to collect, clean and migrate legacy data into the platform | Strong exposure to data migration and PostgreSQL, with 5+ years of experience migrating data between disparate systems. Hands-on experience with MS Excel and macros |
| DevOps Lead | In the case of the cloud: owns all deployments and configurations needed for running the platform in the cloud. In the case of SDC: works with SDC teams on the deployments and configurations needed for running the platform in the SDC | 5+ years of overall experience. Strong hands-on Linux experience (RHEL/CentOS, Debian/Ubuntu, CoreOS). Strong hands-on experience managing AWS/Azure cloud instances. Strong scripting skills (Bash, Python, Perl) with automation. Strong hands-on experience with Git/GitHub, Maven and DNS/networking fundamentals. Strong knowledge of the Jenkins CI/CD tool. Good knowledge of infrastructure automation tools (Ansible, Terraform). Good hands-on experience with Docker containers, including container management platforms like Kubernetes. Strong hands-on experience with web servers (Apache/NGINX) and application servers (JBoss/Tomcat/Spring Boot) |
| Business Analyst | Works with domain experts to understand business and functional requirements and converts them into workable features. Tests developed features for completeness and accuracy with respect to defined business requirements | BE / Masters / MBA. 5+ years of experience in creating detailed business analyses that outline problems, opportunities and solutions, covering planning and monitoring, variance analysis, reporting, and defining business requirements and reporting them back to stakeholders |
| Content / Documentation Expert | Works on documentation and preparation of project-specific artefacts | Good experience in creating and maintaining (multilingual) documentation for government programs. Ability to write clearly in a user-friendly manner. Proficiency in MS Office tools |
| Data Preparation and Coordination (MIS) | Works with ULB teams to gather the data needed to deploy the platform, and guides them in operating it | BCom / MCom / Accounting background. 1+ year of experience, a good understanding of MS Excel and macros, and good typing skills |
| Capacity Building / IEC Personnel | Works with ULB teams on-site to create content and deliver training | BCom / MCom / Accounting background. Required: demonstrated experience in building the capacity of government personnel to influence change. Proficiency with IEC content creation/delivery, theories, methods and technology in the capacity-building field, especially in supporting multi-stakeholder processes |
| Help Desk Support Team | The first line of support for ULB teams, answering all calls about the platform | BCom / MCom / Accounting background. Knowledge of managing a helpdesk, fluency in the local language and good typing skills. Strong process and application knowledge |

National Informatics Centre (NIC) Cloud

Details coming soon...

Git

Do you have a Git account?

Do you know how to clone a repository, pull updates, push updates?

Do you know how to give a pull request and merge the pull request?

Postgres

How to create a database and set up privileges?

How to add an index on a table?

How to use aggregation functions in psql?

Postman

Call a REST API from Postman with proper payload and show the response

Set up any service locally (MDMS or the user service has the fewest dependencies) and check its APIs using Postman

REST APIs

What are the principles to be followed when making a REST API?

When to use POST and GET?

How to define the request and response parameters?

JSON

How to write filters to extract specific data using jsonPaths?

YAML

How to read an API contract using Swagger?

Maven

What is POM?

What is the purpose of mvn clean install, and how do you run it?

What is the difference between a release version and a SNAPSHOT version?

eDCR Approach Guide

How to configure and customize the eDCR engine as per the state/city rules and regulations.

eDCR Service setup

Overall flow of the eDCR service, its design and setup process

This page discusses the infrastructure requirements for DIGIT services. It also explains why DIGIT services are containerised and deployed on Kubernetes.

DIGIT infra is abstracted through Kubernetes, an open-source container orchestration platform that abstracts the variety of infrastructure types available across states, such as physical servers, VMs, on-premises clouds (VMware, OpenStack, Nutanix, etc.), commercial clouds (Google, AWS, Azure, etc.), SDC and NIC, into a single standard infrastructure type. Unifying these infrastructure types makes DIGIT multi-cloud, portable and extensible, and lets it run as high-performance, scalable containerized workloads and services. Kubernetes facilitates both declarative configuration and automation, and its services, ecosystem, support and tools are widely available.

Kubernetes itself is a set of components, each designated a job such as scheduling, controlling or monitoring.

3 or more machines running one of:

Ubuntu 16.04+

Debian 9

CentOS 7

RHEL 7

Container Linux (tested with 1576.4.0)

4 GB or more of RAM per machine (any less will leave little room for your apps)

2 CPUs or more

3 or more machines running one of:

Ubuntu 16.04+

Debian 9

CentOS 7

RHEL 7

Container Linux (tested with 1576.4.0)

2 GB or more of RAM per machine (any less will leave little room for your apps)

2 CPUs or more

Full network connectivity between all machines in the cluster (public or private network is fine)

Unique hostname, MAC address, and product_uuid for every node.

Certain ports are open on your machines. See below for more details

Swap disabled. You MUST disable swap in order for the Kubelet to work properly
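Before installing anything, the machine requirements above can be checked against a planned inventory. The Python sketch below does that for machine count, RAM, CPUs and swap. The node names and specs are hypothetical; on a real host the values could come from /proc/meminfo, nproc and swapon --show. The RAM minimum is a parameter because the lists above give 4 GB and 2 GB for different machine groups.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class NodeSpec:
    hostname: str
    ram_gb: float
    cpus: int
    swap_enabled: bool


def preflight_errors(nodes: List[NodeSpec], min_nodes: int = 3,
                     min_ram_gb: float = 2, min_cpus: int = 2) -> List[str]:
    """List the ways a planned set of machines falls short of the requirements."""
    errors = []
    if len(nodes) < min_nodes:
        errors.append(f"need {min_nodes} or more machines, found {len(nodes)}")
    for node in nodes:
        if node.ram_gb < min_ram_gb:
            errors.append(f"{node.hostname}: {node.ram_gb} GB RAM is below {min_ram_gb} GB")
        if node.cpus < min_cpus:
            errors.append(f"{node.hostname}: {node.cpus} CPU(s) is below {min_cpus}")
        if node.swap_enabled:
            errors.append(f"{node.hostname}: swap must be disabled for the kubelet")
    return errors


# Hypothetical planned machines
planned = [
    NodeSpec("k8s-master-1", ram_gb=4, cpus=2, swap_enabled=False),
    NodeSpec("k8s-worker-1", ram_gb=2, cpus=2, swap_enabled=True),
    NodeSpec("k8s-worker-2", ram_gb=1.5, cpus=1, swap_enabled=False),
]
for problem in preflight_errors(planned):
    print(problem)
```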

Verify that the MAC address and product_uuid are unique for every node. You can get the MAC address of the network interfaces using the command ip link or ifconfig -a

The product_uuid can be checked by using the command sudo cat /sys/class/dmi/id/product_uuid

It is very likely that hardware devices will have unique addresses, although some virtual machines may have identical values. Kubernetes uses these values to uniquely identify the nodes in the cluster. If these values are not unique to each node, the installation process may fail.
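Once the values have been collected from each machine, duplicates are easy to spot programmatically. The Python sketch below reports any hostname, MAC address or product_uuid shared by more than one node, comparing case-insensitively. The inventory is hypothetical and models two VMs cloned from the same template, which is the usual cause of duplicate identifiers.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def duplicate_identifiers(inventory: Dict[str, Dict[str, str]]) -> Dict[str, List[str]]:
    """Report hostname, MAC or product_uuid values shared by more than one node."""
    owners: Dict[Tuple[str, str], List[str]] = defaultdict(list)
    for node, ids in inventory.items():
        for kind in ("hostname", "mac", "product_uuid"):
            owners[(kind, ids[kind].strip().lower())].append(node)  # compare case-insensitively
    return {f"{kind}={value}": nodes
            for (kind, value), nodes in owners.items() if len(nodes) > 1}


# Hypothetical values gathered with "ip link" and "sudo cat /sys/class/dmi/id/product_uuid";
# the two VMs were cloned from one template and share a product_uuid.
inventory = {
    "10.0.0.11": {"hostname": "node-1", "mac": "52:54:00:aa:01:01",
                  "product_uuid": "4C4C4544-0042-1010-8052-B4C04F4E4D31"},
    "10.0.0.12": {"hostname": "node-2", "mac": "52:54:00:aa:01:02",
                  "product_uuid": "4c4c4544-0042-1010-8052-b4c04f4e4d31"},
}
print(duplicate_identifiers(inventory))
```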

If you have more than one network adapter, and your Kubernetes components are not reachable on the default route, we recommend you add IP route(s) so Kubernetes cluster addresses go via the appropriate adapter.

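Master (control-plane) node(s)

| Protocol | Direction | Port Range | Purpose |
|---|---|---|---|
| TCP | Inbound | 6443* | Kubernetes API server |
| TCP | Inbound | 2379-2380 | etcd server client API |
| TCP | Inbound | 10250 | kubelet API |
| TCP | Inbound | 10251 | kube-scheduler |
| TCP | Inbound | 10252 | kube-controller-manager |
| TCP | Inbound | 10255 | Read-only kubelet API |

Worker node(s)

| Protocol | Direction | Port Range | Purpose |
|---|---|---|---|
| TCP | Inbound | 10250 | kubelet API |
| TCP | Inbound | 10255 | Read-only kubelet API |
| TCP | Inbound | 30000-32767 | NodePort Services** |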

** Default port range for NodePort Services.

Any port numbers marked with * are overridable, so you will need to ensure any custom ports you provide are also open.
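The Python sketch below encodes the inbound TCP ports listed above as data and probes whether they are reachable. A successful connection only shows that something is listening and that no firewall blocks the port; a refused or timed-out connection can mean either that the component is not running yet or that the port is closed. The address in the usage block is a placeholder to replace with the node under test.

```python
import socket
from typing import Dict, Iterable, List, Tuple

# (first port, last port, purpose) for inbound TCP, as listed above
CONTROL_PLANE_TCP: List[Tuple[int, int, str]] = [
    (6443, 6443, "Kubernetes API server"),
    (2379, 2380, "etcd server client API"),
    (10250, 10250, "kubelet API"),
    (10251, 10251, "kube-scheduler"),
    (10252, 10252, "kube-controller-manager"),
    (10255, 10255, "Read-only kubelet API"),
]
WORKER_TCP: List[Tuple[int, int, str]] = [
    (10250, 10250, "kubelet API"),
    (10255, 10255, "Read-only kubelet API"),
    (30000, 32767, "NodePort Services"),
]


def is_port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """True if a TCP connection to host:port succeeds (a listener exists and nothing blocks it)."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_ports(host: str, ports: Iterable[int]) -> Dict[int, bool]:
    return {port: is_port_open(host, port) for port in ports}


if __name__ == "__main__":
    # Probe the first port of each control-plane entry; replace 127.0.0.1 with the node's address
    print(check_ports("127.0.0.1", [first for first, _, _ in CONTROL_PLANE_TCP]))
```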

| Systems | Specification | Spec/Count | Comment |
|---|---|---|---|
| User Accounts/VPN | Dev, UAT and Prod environments | 3 | |
| User Roles | Admin, Deploy, ReadOnly | 3 | |
| OS | Any Linux (preferably Ubuntu/RHEL) | All | |
| Kubernetes as a managed service, or VMs to provision Kubernetes | Managed Kubernetes service with HA/DRS (or) VMs with 2 vCore, 4 GB RAM, 20 GB disk | If no managed Kubernetes: 3 VMs/env | Dev - 3 VMs, UAT - 3 VMs, Prod - 3 VMs |
| Kubernetes worker nodes, or VMs to provision Kubernetes worker nodes | VMs with 4 vCore, 16 GB RAM, 20 GB disk per env | 3-5 VMs/env | Dev - 3 VMs, UAT - 4 VMs, Prod - 5 VMs |
| Storage (NFS/iSCSI) | Storage with backup, snapshots and dynamic increase/decrease | 1 TB/env | Dev - 1000 GB, UAT - 800 GB, Prod - 1.5 TB |
| VM Instance IOPS | Max throughput 1750 MB/s | 1750 MB/s | |
| Storage IOPS | Max throughput 1000 MB/s | 1000 MB/s | |
| Internet Speed | Minimum 100 MB/s to 1000 MB/s (dedicated bandwidth) | | |
| Public IP/NAT or LB | Internet-facing, 1 public IP per env | 3 | 3 IPs |
| Availability Region | VMs from different regions are preferable for DRS/HA | At least 2 regions | |
| Private vLAN | All VMs of an env should be within a private vLAN | 3 | |
| Gateways | NAT gateway, internet gateway, payment and SMS gateways, etc. | 1 per env | |
| Firewall | Ability to configure inbound and outbound ports/rules | | |
| Managed Database (or) VM Instance | Managed PostgreSQL 12 or above with backup, snapshots and logging (or) 1 VM with 4 vCore, 16 GB RAM, 100 GB disk per env | Per env | Dev - 1 VM, UAT - 1 VM, Prod - 2 VMs |
| CI/CD server, self-hosted (or) managed DevOps | Self-hosted Jenkins: master and slave (VM with 4 vCore, 8 GB each) (or) managed CI/CD: NIC DevOps, AWS CodeDeploy or Azure DevOps | 2 VMs (master, slave) | |
| Nexus Repo | Self-hosted Artifactory repo (or) NIC Nexus Artifactory | 1 | |
| Docker Registry | Docker Hub (or) self-hosted private Docker registry | 1 | |
| Git/SCM | GitHub (or) any source control tool | 1 | |
| DNS | Main domain and the ability to add more sub-domains | 1 | |
| SSL Certificate | NIC-managed (or) SDC-managed SSL certificate per URL | 2 URLs per env | |
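For procurement it helps to add up the table into a total footprint. The Python sketch below does that arithmetic for the Kubernetes and database VMs and storage, assuming no managed Kubernetes and no managed database are available, counting 1.5 TB as 1500 GB, and leaving out the CI/CD VMs; the variable names are illustrative.

```python
from typing import Dict, Tuple

# Per-environment counts from the table above (assumes no managed Kubernetes and no managed DB):
# env -> (control-plane VMs, worker VMs, DB VMs, storage in GB)
ENVIRONMENTS: Dict[str, Tuple[int, int, int, int]] = {
    "DEV": (3, 3, 1, 1000),
    "UAT": (3, 4, 1, 800),
    "PROD": (3, 5, 2, 1500),  # 1.5 TB taken as 1500 GB
}
VM_SHAPES = {"control_plane": (2, 4), "worker": (4, 16), "db": (4, 16)}  # (vCores, GB RAM)


def total_footprint(envs: Dict[str, Tuple[int, int, int, int]]) -> Dict[str, int]:
    totals = {"vms": 0, "vcores": 0, "ram_gb": 0, "storage_gb": 0}
    for control_plane, workers, dbs, storage_gb in envs.values():
        for count, shape in ((control_plane, "control_plane"), (workers, "worker"), (dbs, "db")):
            vcores, ram_gb = VM_SHAPES[shape]
            totals["vms"] += count
            totals["vcores"] += count * vcores
            totals["ram_gb"] += count * ram_gb
        totals["storage_gb"] += storage_gb
    return totals


print(total_footprint(ENVIRONMENTS))
# {'vms': 25, 'vcores': 82, 'ram_gb': 292, 'storage_gb': 3300}
```

Most of the compute goes to the worker nodes (48 of the 82 vCores and 192 of the 292 GB RAM), so that row is where sizing changes have the largest effect.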

This article provides an overview of DIGIT infra and guidelines for operational excellence when DIGIT is deployed on SDC, NIC or any commercial cloud, along with recommendations and segregation of duties (SoD). It helps plan procurement and build the capabilities needed to deploy and implement DIGIT.

Under a shared-control model, the state program team/partners should consider these guidelines and provide their own control implementation for the state's cloud infrastructure and partners, to achieve standard and smooth operations.

DIGIT strongly recommends Site reliability engineering (SRE) principles as a key means to bridge between development and operations by applying a software engineering mindset to system and IT administration topics. In general, an SRE team is responsible for availability, latency, performance, efficiency, change management, monitoring, emergency response, and capacity planning.
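Availability targets are easier to reason about as a downtime budget. The Python arithmetic below converts an availability target into the minutes of downtime it permits over a window (30 days by default). The targets shown are examples only, not DIGIT SLAs.

```python
def allowed_downtime_minutes(availability: float, window_days: int = 30) -> float:
    """Minutes of downtime an availability target permits over the window."""
    return (1 - availability) * window_days * 24 * 60


for target in (0.99, 0.995, 0.999):
    print(f"{target:.1%} -> {allowed_downtime_minutes(target):.1f} min per 30 days")
```

A 99.9% target leaves about 43 minutes per month for incidents, deployments and maintenance together, which is why change management and monitoring sit alongside availability in the SRE remit.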

Monitoring tool recommendations: commercial clouds such as AWS, Azure and GCP offer sophisticated monitoring solutions across various infra levels, such as CloudWatch and Stackdriver. In the absence of such managed monitoring services, the best practices and tools listed below help with efficient debugging and troubleshooting.

Segregation of duties and responsibilities.

SME and SPOCs for L1.5 support along with the SLAs defined.

Ticketing system to manage incidents, converge and collaborate on various operational issues.

Monitoring dashboards at various levels like Infrastructure, Network and applications.

Transparency of monitoring data and collaboration between teams.

Periodic remote sync-up meetings, with confirmed acceptance and attendance.

Visibility of stakeholders' calendar availability for scheduling meetings.

Periodic (weekly, monthly) summary reports of the various infra, operations incident categories.

Communication channels and synchronization on a regular basis and also upon critical issues, changes, upgrades, releases etc.

When DIGIT is deployed on state cloud infrastructure, it is essential to identify and distinguish the responsibilities of the infrastructure, operations and implementation partners. Identify these teams, assign a SPOC to each, define their responsibilities, and follow an incident management process to visualize and track issues and manage dependencies between teams. These are typically monitored through dashboards, with alerts sent proactively to the stakeholders. The eGov team can provide consultation and training as needed in any of the categories below.

State program team - Refers to the owner of the whole DIGIT implementation, application rollouts and capacity building. Responsible for identifying and synchronizing the operating mechanism between the teams below.

Implementation partner - Refers to the team responsible for the DIGIT implementation and application performance monitoring: errors, log scrutiny, TPS at peak load, distributed tracing, DB query analysis, etc.

Operations team - This team could be an extension of the implementation team and is responsible for DIGIT deployments, configurations, CI/CD, change management, traffic monitoring and alerting, log monitoring and dashboards, application security, DB backups, application uptime, etc.

State IT/Cloud team - Refers to the state infra team responsible for the infra and network architecture, LAN speed, internet speed, OS licensing, upgrades and patches, compute, memory, disk, firewall, IOPS, security, access, SSL, DNS, data backups/recovery, snapshots and capacity monitoring dashboards.

| Tools/Skills | Specification | Weightage (1-5) | Yes/No |
|---|---|---|---|
| System Administration | Linux administration, troubleshooting, OS installation, package management, security updates, firewall configuration, performance tuning, recovery, networking, routing tables, etc. | 4 | |
| Containers/Docker | Build/push Docker containers, tune and maintain containers, startup scripts, troubleshooting Docker containers | 2 | |
| Kubernetes | Set up Kubernetes clusters on bare metal and VMs using kubeadm/kubespray, Terraform, etc. Strong understanding of Kubernetes components, configurations, kubectl commands and RBAC. Creating and attaching persistent volumes, log aggregation, deployments, networking, service discovery, rolling updates. Scaling pods, deployments and worker nodes, node affinity, secrets, ConfigMaps, etc. | 3 | |
| Database Administration | Set up PostgreSQL, read replicas, backups, logging and DB RBAC; SQL queries | 3 | |
| Docker Registry | Set up and manage a Docker registry | 2 | |
| SCM/Git | Source code management, branches, forking, tagging, pull requests, etc. | 4 | |
| CI Setup | Jenkins setup, master-slave configuration, plugins, Jenkinsfile, Groovy scripting, Jenkins CI jobs for Maven and Node applications, deployment jobs, etc. | 4 | |
| Artifact Management | Code artifact management, versioning | 1 | |
| Apache Tomcat | Web server setup, configuration, load balancing, sticky sessions, etc. | 2 | |
| WildFly JBoss | Application server setup, configuration, etc. | 3 | |
| Spring Boot | Build and deploy Spring Boot applications | 2 | |
| NodeJS | NPM setup and building Node applications | 2 | |
| Scripting | Shell scripting, Python scripting | 4 | |
| Log Management | Aggregating system and container logs, troubleshooting. Monitoring dashboards for logs using Prometheus, Fluentd, Kibana, Grafana, etc. | 3 | |
| WordPress | Set up and maintain a multi-tenant portal | 2 | |
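One possible way to use the Weightage and Yes/No columns when evaluating a candidate is a weighted coverage score plus a list of gaps ordered by weight. The Python sketch below does that; the scoring rule and the candidate's answers are hypothetical, not an eGov evaluation method.

```python
from typing import Dict, List

# Weightage column from the skills table above
SKILL_WEIGHTS: Dict[str, int] = {
    "System Administration": 4, "Containers/Docker": 2, "Kubernetes": 3,
    "Database Administration": 3, "Docker Registry": 2, "SCM/Git": 4,
    "CI Setup": 4, "Artifact Management": 1, "Apache Tomcat": 2,
    "WildFly JBoss": 3, "Spring Boot": 2, "NodeJS": 2,
    "Scripting": 4, "Log Management": 3, "WordPress": 2,
}


def weighted_coverage(answers: Dict[str, bool]) -> float:
    """Share of the total weight covered by skills answered 'Yes'."""
    total = sum(SKILL_WEIGHTS.values())
    covered = sum(w for skill, w in SKILL_WEIGHTS.items() if answers.get(skill, False))
    return covered / total


def gaps(answers: Dict[str, bool]) -> List[str]:
    """Skills answered 'No' (or left blank), highest weight first; ties keep table order."""
    missing = [skill for skill in SKILL_WEIGHTS if not answers.get(skill, False)]
    return sorted(missing, key=lambda skill: SKILL_WEIGHTS[skill], reverse=True)


# Hypothetical candidate
candidate = {"System Administration": True, "Kubernetes": True, "SCM/Git": True,
             "Scripting": True, "CI Setup": False}
print(f"coverage: {weighted_coverage(candidate):.0%}")
print("train first on:", gaps(candidate)[:3])
```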

This document aims to put together all the items which will enable us to come up with a proper training plan for a partner team that will be working on the DIGIT platform.

Below listed are the technical skill sets that are required to work on the DIGIT stack. It is expected the team planning on attending training is well versed with the mentioned technologies before they attend eGov training sessions.

Open API Contract - Swagger2.0

YAML/JSON

Postman

Postgres

Java and REST APIs

Basics of Elasticsearch

Maven

Springboot

Kafka

Zuul

NodeJS, ReactJS

WordPress

PHP

Understanding of the microservice architecture.

Experience with AWS, Azure, GCP and NIC Cloud.

Strong working knowledge of Linux commands, VM instances, networking and storage.

Ability to create Kubernetes clusters on AWS, Azure, GCP or NIC Cloud.

kubectl installation and commands (apply, get, edit, describe k8s objects).

Terraform for infra-as-code for cluster or VM provisioning.

Understanding of VM types, Linux OS types, load balancers, VPCs, subnets, security groups, firewalls, routing and DNS.

Experience setting up CI tools like Jenkins and creating pipelines.

Deployment strategies - Rolling updates, Canary, Blue/Green.

Scripting - Shell, Groovy, Python and Go.

Experience baking container images with Docker.

Artifactory - Nexus, Verdaccio, Docker Hub, etc.

Experience with Kubernetes ingress, and with setting up and renewing SSL certificates.

Understanding of the Zuul gateway.

GitOps, Git branching, PR review process, rules, hooks, etc.

Experience in Helm, packaging and deploying.

JBoss Wildfly, Apache, Nginx, Redis and Postgres.
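Of the deployment strategies listed, canary releases are the least self-explanatory: a small, fixed share of traffic goes to the new version while the rest stays on the stable one. Hashing a stable request attribute (here a user ID) keeps each user on the same version between requests. Blue/green can be seen as the special case of moving the share from 0% straight to 100%. The Python sketch below illustrates the routing decision only; in a cluster this is normally done by an ingress controller or service mesh, and the user IDs are hypothetical.

```python
import hashlib


def route(request_key: str, canary_percent: int) -> str:
    """Send a stable `canary_percent` share of traffic to the new version."""
    bucket = int(hashlib.sha256(request_key.encode("utf-8")).hexdigest(), 16) % 100  # 0..99
    return "canary" if bucket < canary_percent else "stable"


# Hypothetical user IDs; the same user always lands on the same version.
users = [f"user-{n}" for n in range(10_000)]
share = sum(route(u, 10) == "canary" for u in users) / len(users)
print(f"{share:.1%} of users routed to the canary")
```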

Trainees are expected to have laptops/ desktops configured as mentioned below with all the software required to run the DIGIT application

Laptop for hands-on training with 16GB RAM and OS preferably Ubuntu

All developers need to have Git ids

Install VSCode/IntelliJ/Eclipse

Install Git

Install JDK 8 update 112 or higher

Install Maven v3.2.x

Install PostgreSQL v9.6

Install Elasticsearch v2.4.x

Postman

Knowledge assets are available on the internet for general topics, and eGov assets are available for DIGIT services. Here you can find references for each of the important topics. Trainees are required to self-study all the software mentioned in the prerequisites using the reference materials shared.

Git

Do you have a Git account?

Do you know how to clone a repository, pull updates, push updates?

Do you know how to give a pull request and merge the pull request?

Microservice Architecture

Do you know when to create a new service?

How to access other services?

ReactJS

How to create a React app?

How to create a Stateful and Stateless Component?

How to use HOC as a wrapper?

Validations at form level using React.js and Redux

Postgres

How to create a database and set up privileges?

How to add an index on a table?

How to use aggregation functions in psql?

Postman

Call a REST API from Postman with proper payload and show the response

Set up any service locally (MDMS or the user service has the fewest dependencies) and check its APIs using Postman

REST APIs

What are the principles to be followed when making a REST API?

When to use POST and GET?

How to define the request and response parameters?

Kafka

How to push messages on Kafka topic?

How does the consumer group work?

What are partitions?
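The Python sketch below illustrates the three Kafka questions above. Messages with the same key are hashed to the same partition, which preserves per-key ordering; within one consumer group each partition is read by exactly one member, so consumers beyond the partition count sit idle. Kafka's default partitioner hashes keys with murmur2, and its assignors are more elaborate, so CRC32 and a simple round robin stand in here; the key and consumer names are hypothetical.

```python
import zlib
from typing import Dict, List


def partition_for(key: str, num_partitions: int) -> int:
    """Same key -> same partition, so messages for one key stay in order."""
    # Kafka's default partitioner uses murmur2; CRC32 stands in for it here.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def assign_round_robin(num_partitions: int, consumers: List[str]) -> Dict[str, List[int]]:
    """Spread a topic's partitions across the members of ONE consumer group."""
    assignment: Dict[str, List[int]] = {consumer: [] for consumer in consumers}
    for partition in range(num_partitions):
        owner = consumers[partition % len(consumers)]  # each partition gets exactly one owner
        assignment[owner].append(partition)
    return assignment


print(partition_for("PB-TL-2024-000123", 3))  # hypothetical application number as the key
print(assign_round_robin(5, ["consumer-a", "consumer-b"]))
print(assign_round_robin(2, ["consumer-a", "consumer-b", "consumer-c"]))  # consumer-c stays idle
```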

Docker and Kubernetes

How to edit deployment configuration?

How to read logs?

How to go inside a Kubernetes pod?

How to create a docker file using a base image?

How to port-forward the pod to the local port?

JSON

How to write filters to extract specific data using jsonPaths?
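A JSONPath filter such as $.MdmsRes.tenant.tenants[?(@.code == 'pb.amritsar')].city.name walks down the object, keeps only the array elements whose predicate holds, and then projects a field from each survivor. The Python sketch below spells out what that expression selects by evaluating the equivalent comprehension over a small, hypothetical MDMS-style response; a JSONPath library would evaluate the expression string directly.

```python
import json

# A hypothetical MDMS-style response, trimmed to the fields used below.
response = json.loads("""
{
  "MdmsRes": {
    "tenant": {
      "tenants": [
        {"code": "pb.amritsar", "city": {"name": "Amritsar", "districtName": "Amritsar"}},
        {"code": "pb.jalandhar", "city": {"name": "Jalandhar", "districtName": "Jalandhar"}}
      ]
    }
  }
}
""")

# JSONPath: $.MdmsRes.tenant.tenants[?(@.code == 'pb.amritsar')].city.name
city_names = [
    tenant["city"]["name"]
    for tenant in response["MdmsRes"]["tenant"]["tenants"]
    if tenant["code"] == "pb.amritsar"  # the [?(...)] filter predicate
]
print(city_names)  # ['Amritsar']
```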

YAML

How to read an API contract using Swagger?

Zuul

What does Zuul do?

Maven

What is POM?

What is the purpose of mvn clean install, and how do you run it?

What is the difference between a release version and a SNAPSHOT version?

Springboot

How does Autowiring work in spring?

How to write a consumer/producer using Spring Kafka?

How to make an API call to another service using RestTemplate?

How to execute queries using JdbcTemplate?

Elasticsearch

How to write basic queries to fetch data from an Elasticsearch index?

WordPress

DIGIT Architecture

What comes as part of core service, business service and municipal services?

How to call the APIs of one service from another service?

DIGIT Core Services

Which are the core services in the DIGIT framework?

DIGIT DevOps

DIGIT MDMS

How to read master data from MDMS?

How to add new data in an existing Master?

Where is the MDMS data stored?

DIGIT UI Framework

How to add a new component to the framework?

How to use an existing component?

DSS

For provisioning Kubernetes clusters with the Azure cloud provider, Kubermatic needs a service account with (at least) the Azure role Contributor. Follow the steps below to create a matching service account.

Log in to Azure with the Azure CLI, az: az login

This command opens a window in your default browser where you can authenticate. After you have logged in successfully, get your subscription ID: az account show --query id -o tsv

Get your tenant ID: az account show --query tenantId -o tsv

Create a new app (service principal) with the Contributor role: az ad sp create-for-rbac --role="Contributor" --scopes="/subscriptions/<subscription-id>"

Enter provider credentials using the values from step “Prepare Azure Environment” into Kubermatic Dashboard:

Client ID: Take the value of appId

Client Secret: Take the value of password

Tenant ID: your tenant ID

Subscription ID: your subscription ID
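The mapping above can be scripted when several clusters are set up. The Python sketch below assumes the JSON printed by az ad sp create-for-rbac contains the fields appId, password and tenant, and turns it into the four Kubermatic dashboard fields. All values shown are placeholders, and the function name is hypothetical.

```python
import json
from typing import Dict


def kubermatic_fields(sp_output: str, subscription_id: str) -> Dict[str, str]:
    """Map service-principal JSON (az ad sp create-for-rbac) to the Kubermatic form fields."""
    sp = json.loads(sp_output)
    return {
        "Client ID": sp["appId"],
        "Client Secret": sp["password"],  # a secret: do not log it or commit it
        "Tenant ID": sp["tenant"],
        "Subscription ID": subscription_id,
    }


# Placeholder output; real values come from your own az commands.
sample = json.dumps({
    "appId": "00000000-0000-0000-0000-000000000001",
    "displayName": "kubermatic",
    "password": "<secret>",
    "tenant": "00000000-0000-0000-0000-000000000002",
})
fields = kubermatic_fields(sample, "00000000-0000-0000-0000-000000000003")
print({name: ("***" if name == "Client Secret" else value) for name, value in fields.items()})
```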

The Kubernetes vSphere driver contains bugs related to detaching volumes from offline nodes. See the Volume detach bug section for more details.

When creating worker nodes for a user cluster, the user can specify an existing image. Defaults may be set in the datacenters.yaml.

Supported operating systems

Go into the vSphere Web UI, select your data centre, right-click it and choose “Deploy OVF Template”

Fill in the “URL” field with the appropriate URL

Click through the dialogue until “Select storage”

Select the same storage you want to use for your machines

Select the same network you want to use for your machines

Leave everything in the “Customize Template” and “Ready to complete” dialogue as it is

Wait until the VM has been fully imported and the “Snapshots” => “Create Snapshot” button is no longer greyed out.

The template VM must have the disk.enableUUID flag set to 1. This can be done using the govc tool with the following command: govc vm.change -vm <path-to-template-vm> -e disk.enableUUID=1

Convert it to vmdk: qemu-img convert -f qcow2 -O vmdk CentOS-7-x86_64-GenericCloud.qcow2 CentOS-7-x86_64-GenericCloud.vmdk

Upload it to a Datastore of your vSphere installation

Create a new virtual machine that uses the uploaded vmdk as rootdisk.

Modifications such as network, disk size, etc. must be made in the OVA template before creating a worker node from it. If user clusters have dedicated networks, each user cluster therefore needs its own custom template.

During the creation of a user cluster Kubermatic creates a dedicated VM folder in the root path on the Datastore (Defined in the datacenters.yaml). That folder will contain all worker nodes of a user cluster.

Kubernetes needs to talk to vSphere to enable storage inside the cluster. For this, Kubernetes needs a config called cloud-config, which contains all the details needed to connect to a vCenter installation, including credentials.

As this config must also be deployed onto each worker node of a user cluster, it is recommended to use individual credentials for each user cluster.

The VSphere user must have the following permissions on the correct resources

Role k8c-storage-vmfolder-propagate

Granted at VM Folder and Template Folder, propagated

Permissions

Virtual machine

Change Configuration

Add existing disk

Add new disk

Add or remove the device

Remove disk

Folder

Create folder

Delete folder

Role k8c-storage-datastore-propagate

Granted at Datastore, propagated

Permissions

Datastore

Allocate space

Low-level file operations

Role Read-only (predefined)

Granted at …, not propagated

Datacenter

Role k8c-user-vcenter

Granted at vCenter level, not propagated

Needed to customize VM during provisioning

Permissions

VirtualMachine

Provisioning

Modify customization specification

Read customization specifications

Role k8c-user-datacenter

Granted at datacentre level, not propagated

Needed for cloning the template VM (obviously this is not done in a folder at this time)

Permissions

Datastore

Allocate space

Browse datastore

Low-level file operations

Remove file

vApp

vApp application configuration

vApp instance configuration

Virtual Machine

Change CPU count

Memory

Settings

Inventory

Create from existing

Role k8c-user-cluster-propagate: granted at the cluster level, propagated. Needed for uploading cloud-init.iso (Ubuntu and CentOS) or defining the Ignition config in Guestinfo (CoreOS).

Permissions: Host > Configuration > System Management; Host > Local operations > Reconfigure virtual machine; Resource > Assign virtual machine to resource pool, Migrate powered off virtual machine, Migrate powered on virtual machine; vApp > vApp application configuration, vApp instance configuration.

Role k8s-network-attach: granted for each network that should be used.

Permissions: Network > Assign network.

Role k8c-user-datastore-propagate: granted at datastore/datastore cluster level, propagated.

Permissions: Datastore > Allocate space, Browse datastore, Low-level file operations.

Role k8c-user-folder-propagate: granted at VM Folder and Template Folder level, propagated. Needed for managing the node VMs.

Permissions: Folder > Create folder, Delete folder; Global > Set custom attribute; Virtual machine > Change Configuration, Edit Inventory, Guest operations, Interaction, Provisioning, Snapshot management.

The described permissions have been tested with vSphere 6.7 and might be different for other vSphere versions.
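If the required permissions are kept as data, an existing role can be audited against them. The sketch below is hypothetical: it covers only a subset of the roles above, uses the human-readable privilege paths from this page rather than vSphere privilege IDs, and assumes the privileges a role currently grants have already been exported from vCenter by other means.

```python
# Required privileges per role, taken from the list above (subset only).
# Paths use this page's human-readable names, NOT vSphere privilege IDs.
REQUIRED_PRIVILEGES: dict[str, set[str]] = {
    "k8c-storage-vmfolder-propagate": {
        "Virtual machine > Change Configuration > Add existing disk",
        "Virtual machine > Change Configuration > Add new disk",
        "Virtual machine > Change Configuration > Add or remove device",
        "Virtual machine > Change Configuration > Remove disk",
        "Folder > Create folder",
        "Folder > Delete folder",
    },
    "k8c-storage-datastore-propagate": {
        "Datastore > Allocate space",
        "Datastore > Low-level file operations",
    },
    "k8s-network-attach": {
        "Network > Assign network",
    },
    "k8c-user-datastore-propagate": {
        "Datastore > Allocate space",
        "Datastore > Browse datastore",
        "Datastore > Low-level file operations",
    },
}


def missing_privileges(role: str, granted: set[str]) -> list[str]:
    """Return the required privileges of `role` that are absent from `granted`, sorted."""
    if role not in REQUIRED_PRIVILEGES:
        raise KeyError(f"unknown role: {role}")
    return sorted(REQUIRED_PRIVILEGES[role] - granted)


if __name__ == "__main__":
    # Hypothetical export of what the storage datastore role currently grants.
    granted = {"Datastore > Allocate space"}
    for privilege in missing_privileges("k8c-storage-datastore-propagate", granted):
        print("missing:", privilege)
```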

After a node is powered off, the Kubernetes vSphere driver does not detach disks associated with PVCs mounted on that node. As a result, pods using these PVCs cannot be rescheduled until the disks are manually detached in vCenter.
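To find out which pods are affected before detaching disks in vCenter, the pods on the powered-off node that mount PVCs can be listed. A minimal sketch, assuming the pod list was exported with `kubectl get pods --all-namespaces -o json`; it matches pods on `spec.nodeName` and reads claim names from `spec.volumes[].persistentVolumeClaim.claimName`. The script and node names are hypothetical.

```python
import json
import sys


def pvc_pods_on_node(pod_list: dict, node: str) -> list[tuple[str, str, str]]:
    """Return (namespace, pod, claimName) for each PVC volume of pods scheduled on `node`."""
    found = []
    for pod in pod_list.get("items", []):
        spec = pod.get("spec", {})
        if spec.get("nodeName") != node:
            continue
        meta = pod.get("metadata", {})
        for volume in spec.get("volumes", []):
            claim = volume.get("persistentVolumeClaim")
            if claim:  # skip configMap, secret, emptyDir, ... volumes
                found.append((meta.get("namespace", ""), meta.get("name", ""), claim["claimName"]))
    return found


if __name__ == "__main__" and len(sys.argv) == 2:
    # kubectl get pods --all-namespaces -o json | python pvc_pods.py <node-name>
    for namespace, pod, claim in pvc_pods_on_node(json.load(sys.stdin), sys.argv[1]):
        print(f"{namespace}/{pod} uses PVC {claim}")
```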

Upstream Kubernetes has been working on the issue for a long time now and tracking it under the following tickets:

These requirements make it clear that a DevOps engineer needs a wide variety of skills to manage DIGIT DevOps.

Anyone involved in hiring DevOps engineers will realize that it is hard to find prospective candidates who have all the skills listed in this section.

Ultimately, the skill set needed for an incoming DevOps engineer depends on the current and short-term focus of the operations team. A brand-new team rolling out a new software service would need someone with good experience in infrastructure provisioning, deployment automation, and monitoring. A team supporting a stable product might need an expert who can migrate home-grown automation projects to tools and processes built around standard configuration management and continuous integration tools.

DevOps practice is the glue between engineering disciplines. An experienced DevOps engineer ends up working across a very broad swath of the technology landscape, overlapping with software development, system integration, and operations engineering.

An experienced DevOps engineer should be able to describe most of the technologies covered in the following sections. This is a comprehensive list of DevOps skills against which to compare one's expertise, and a reference template for acquiring new skills.

Ideally, a template like this should be used only to assess the current experience of a prospective hire. The remaining skills can be picked up on the job when a project demands deep knowledge in a particular area. Therefore, the focus should be on hiring smart engineers who have a track record of picking up new skills, rolling out innovative projects at work, and contributing to reputable open-source projects.

A DevOps engineer should have a good understanding of both classic (data centre-based) and cloud infrastructure components, even if the team has a dedicated infrastructure team.

This covers how physical hardware (servers and storage devices) is racked, networked, and accessed from both the corporate network and the internet. It also covers provisioning shared storage for use across multiple servers and the methods available for doing so, as well as load-balancing infrastructure and methods.

Hypervisors.

Virtual machines.

Object storage.

Running virtual machines on PC and Mac (Vagrant, VMware, etc.).

Cloud infrastructure covers core cloud computing and storage components as they are implemented in one of the popular virtualization technologies (VMware or OpenStack). It also covers elastic infrastructure and the options available to implement it.

Network layers

Routers, domain controllers, etc.

Networks and subnets

IP address

VPN

DNS

Firewall

iptables

Network access between applications (ACL)

Networking in the cloud (e.g., Amazon AWS)

Load balancing infrastructure and methods

Geographical load balancing

Understanding of CDN

Load balancing in the cloud
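Subnetting is a typical exercise in these fundamentals. A small sketch with Python's standard `ipaddress` module that carves equal-sized subnets out of a larger range, as one might when planning per-zone subnets for a cluster; the CIDR values are illustrative.

```python
import ipaddress


def plan_subnets(cidr: str, new_prefix: int, count: int) -> list[str]:
    """Split `cidr` into /new_prefix subnets and return the first `count` of them."""
    network = ipaddress.ip_network(cidr)
    subnets = list(network.subnets(new_prefix=new_prefix))
    if count > len(subnets):
        raise ValueError(f"{cidr} only holds {len(subnets)} /{new_prefix} subnets")
    return [str(s) for s in subnets[:count]]


def usable_hosts(cidr: str) -> int:
    """Assignable IPv4 host addresses (network and broadcast excluded); meant for /30 or larger."""
    network = ipaddress.ip_network(cidr)
    return max(network.num_addresses - 2, 0)


if __name__ == "__main__":
    # Hypothetical VPC range split into three /24 subnets, e.g. one per availability zone.
    for subnet in plan_subnets("10.0.0.0/16", 24, 3):
        print(subnet, "usable hosts:", usable_hosts(subnet))
```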

A DevOps engineer should have experience using specialized tools for implementing various DevOps processes. While Jenkins, Docker, Kubernetes, Terraform, Ansible, and the like are known to most DevOps engineers, other tools might be obscure or less obvious (for example, the importance of knowing one major monitoring tool inside and out). Some tools, such as source code control systems, are shared with development teams.

The list here has only examples of basic tools. An experienced DevOps engineer would have used some application or tool from all or most of these categories.

Expert-level knowledge of an SCM system such as Git or Subversion.

Knowledge of code branching best practices, such as Git-Flow.

Knowledge of the importance of checking in Ops code to the SCM system.

Experience using GitHub.

Experience using a major bug management system such as Bugzilla or Jira.

Ability to define a workflow for the bug filing and resolution process.

Experience integrating SCM systems with the bug resolution process and using triggers or REST APIs.

Knowledge of Wiki basics.

Experience using MediaWiki, Confluence, etc.

Knowledge of why DevOps projects have to be documented.

Knowledge of how documents are organized on a Wiki-based system.

Experience setting up Jenkins, both standalone and dockerized.

Experience using Jenkins as a Continuous Integration (CI) platform.

CI/CD pipeline scripting using Groovy.

Experience with CI platform features such as:

Integration with SCM systems.

Secret management and SSH-based access management.

Scheduling and chaining of build jobs.

Source-code change based triggers.

Worker (agent) nodes.

REST API support and Notification management.
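Source-code change triggers and REST API support often meet in a webhook or script that starts a Jenkins job remotely. The sketch below only builds such a request, assuming Jenkins' remote-access endpoints `/job/<name>/build` and `/job/<name>/buildWithParameters` with HTTP basic authentication using an API token; the server URL, job path, parameter and credentials are hypothetical.

```python
import base64
import urllib.parse
import urllib.request
from typing import Optional


def build_trigger_request(jenkins_url: str, job_path: str, user: str, api_token: str,
                          params: Optional[dict] = None) -> urllib.request.Request:
    """Build (but do not send) a POST that queues a Jenkins job remotely.

    Folders in `job_path` ("digit/deploy") map to /job/digit/job/deploy.
    """
    segments = "".join(f"/job/{urllib.parse.quote(part)}"
                       for part in job_path.strip("/").split("/"))
    # Parameterized jobs use buildWithParameters; plain jobs use build.
    endpoint = "buildWithParameters" if params else "build"
    url = f"{jenkins_url.rstrip('/')}{segments}/{endpoint}"
    if params:
        url += "?" + urllib.parse.urlencode(params)
    # HTTP basic auth with a user's API token (not the account password).
    token = base64.b64encode(f"{user}:{api_token}".encode()).decode()
    return urllib.request.Request(url, method="POST",
                                  headers={"Authorization": f"Basic {token}"})


if __name__ == "__main__":
    # Hypothetical server, job and credentials; load the token from a secret store in practice.
    req = build_trigger_request("https://jenkins.example.com", "digit/deploy-egov",
                                "ci-bot", "API_TOKEN", {"ENV": "qa"})
    print(req.get_method(), req.full_url)
    # urllib.request.urlopen(req) would send it; Jenkins normally answers 201 with a
    # Location header pointing at the queued item.
```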

Should know what artefacts are and why they have to be managed.

Experience using a standard artefacts management system such as Artifactory.

Experience caching third-party tools and dependencies in-house.

Should be able to explain configuration management.

Experience using any Configuration Management Database (CMDB) system.

Experience using open-source tools such as Cobbler for inventory management.

Ability to do both agent-less and agent-driven enforcement of configuration.

Experience using Ansible, Puppet, Chef, Cobbler, etc.

Knowledge of the workflow of released code getting into production.

Ability to push code to production with the use of SSH-based tools such as Ansible.

Ability to perform on-demand or Continuous Delivery (CD) of code from Jenkins.

Ability to perform agent-driven code pull to update the production environment.

Knowledge of deployment strategies, with or without an impact on the software service.

Knowledge of code deployment in the cloud (using auto-scaling groups, machine images, etc.).

Knowledge of all monitoring categories: system, platform, application, business, last-mile, log management, and meta-monitoring.

Status-based monitoring with Nagios.

Data-driven monitoring with Zabbix.

Experience with last-mile monitoring, as done by Pingdom or Catchpoint.

Experience doing log management with ELK.

Experience with SaaS monitoring solutions (e.g., Datadog and Loggly).
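Status-based monitoring in Nagios is built on small check plugins. A minimal sketch of one in Python, assuming the standard Nagios plugin conventions (exit code 0 = OK, 1 = WARNING, 2 = CRITICAL, 3 = UNKNOWN, and one status line with optional performance data after `|`); the script name and the 80/90% thresholds are hypothetical.

```python
import shutil
import sys

# Standard Nagios plugin exit codes.
OK, WARNING, CRITICAL, UNKNOWN = 0, 1, 2, 3
LABELS = {OK: "OK", WARNING: "WARNING", CRITICAL: "CRITICAL", UNKNOWN: "UNKNOWN"}


def classify(used_pct: float, warn: float, crit: float) -> int:
    """Map a usage percentage to a Nagios state; thresholds are inclusive."""
    if used_pct >= crit:
        return CRITICAL
    if used_pct >= warn:
        return WARNING
    return OK


def check_disk(path: str, warn: float = 80.0, crit: float = 90.0) -> tuple[int, str]:
    """Return (exit_code, status_line) for the filesystem holding `path`."""
    try:
        usage = shutil.disk_usage(path)
    except OSError as exc:
        return UNKNOWN, f"DISK UNKNOWN - {exc}"
    used_pct = 100.0 * usage.used / usage.total
    state = classify(used_pct, warn, crit)
    # Text before '|' is shown to operators; text after it is performance data.
    return state, (f"DISK {LABELS[state]} - {path} {used_pct:.1f}% used"
                   f" | used_pct={used_pct:.1f}%;{warn};{crit}")


if __name__ == "__main__" and len(sys.argv) > 1:
    # Invoked by Nagios as: check_disk_usage.py <path> [warn] [crit]
    args = sys.argv[1:]
    code, line = check_disk(args[0], *map(float, args[1:3]))
    print(line)
    sys.exit(code)
```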

To get an automation project up and running, a DevOps engineer builds new things, from configuration objects in an application to code ranging from snippets to full-blown programs. However, a major part of the work is gluing many things together at the system level on the given infrastructure. Such efforts are no different from traditional system integration work and, in my opinion, the ingenuity of an engineer at this level determines his or her real value to the team. It is easy to find cookbooks, recipes, and best practices for vendor-supported tools, but it takes experience on diverse projects to gain the skill set needed to implement robust integrations that work reliably in production.

Important system-level tools and techniques are listed here. The engineer should have knowledge about the following.

Users and groups on Linux.

Use of service accounts for automation.

Sudo commands, /etc/sudoers files, and passwordless access.

Using LDAP and AD for access management.

Remote access using SSH.

SSH keys and related topics.

SCP, SFTP, and related tools.

SSH key formats.

Managing access using configuration management tools.

Use of GPG for password encryption.

Tools for password management such as KeePass.

MD5 for hashing and KMS for encryption/decryption.

Remote access with authentication from automation scripts.

Managing API keys.

Jenkins plugins for password management.

Basics of compilers and runtimes such as javac and Node.js.

Make and Makefile, npm, Maven, Gradle, etc.

Code libraries in Node, Java, Python, React, etc.

Build artefacts such as JAR, WAR and node modules.

Running builds from Jenkins.

Packaging files: ZIP, TAR, GZIP, etc.

Packaging for deployment: RPM and Debian packages, installed with package managers such as DNF and Zypper.

Packaging for the cloud: AWS AMI, VMware template, etc.

Use of Packer.

Docker and containers for microservices.
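Packaging is usually paired with a checksum so that later stages can verify the artefact they receive. A minimal sketch using Python's standard `tarfile` and `hashlib` modules; the build directory and archive names are hypothetical.

```python
import hashlib
import tarfile
import tempfile
from pathlib import Path


def package_build(build_dir: str, archive_path: str) -> str:
    """Create a .tar.gz of `build_dir` and return the archive's SHA-256 hex digest."""
    src = Path(build_dir)
    if not src.is_dir():
        raise NotADirectoryError(build_dir)
    with tarfile.open(archive_path, "w:gz") as tar:
        # arcname stores entries under the directory's own name, not its absolute path.
        tar.add(src, arcname=src.name)
    digest = hashlib.sha256()
    with open(archive_path, "rb") as fh:
        for chunk in iter(lambda: fh.read(1 << 20), b""):  # read in 1 MiB chunks
            digest.update(chunk)
    return digest.hexdigest()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        build = Path(tmp, "egov-app-1.0.0")  # hypothetical build output directory
        build.mkdir()
        (build / "app.jar").write_bytes(b"placeholder")
        archive = Path(tmp, "egov-app-1.0.0.tar.gz")
        sha = package_build(str(build), str(archive))
        # Same layout as `sha256sum` output, so `sha256sum -c` can verify it later.
        print(f"{sha}  {archive.name}")
```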

Use of an artefact repository for distributing and releasing builds and meeting build and deployment dependencies

Serving artefacts from a shared storage volume

Mounting locations from cloud storage services such as AWS S3

Artifactory as an artefact server

SCP, rsync, FTP, and their SSL counterparts

Via shared storage

File transfer with cloud storage services such as AWS S3

Code pushing using system-level file transfer tools.

Scripting using SSH libraries such as Paramiko.

Orchestrating code pushes using configuration management tools.
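Pushing code with system-level file transfer tools can be scripted in a few lines. A minimal sketch that shells out to `rsync` and `ssh` (a library such as Paramiko is the alternative) to mirror a build directory to each host and optionally run a restart command; it defaults to a dry run that only prints the commands. Key-based SSH access is assumed, and the hosts, the `deploy` user, paths and service name are hypothetical.

```python
import shlex
import subprocess
from typing import Optional


def push_commands(src: str, host: str, dest: str, user: str = "deploy",
                  restart_cmd: Optional[str] = None) -> list[list[str]]:
    """Build the rsync command (plus an optional remote restart) for one host."""
    target = f"{user}@{host}"
    cmds = [[
        "rsync", "-az", "--delete",      # archive mode, compress, delete remote files missing locally
        "-e", "ssh -o BatchMode=yes",    # fail instead of prompting for a password
        src.rstrip("/") + "/",           # trailing slash: copy the directory's contents
        f"{target}:{dest}",
    ]]
    if restart_cmd:
        cmds.append(["ssh", "-o", "BatchMode=yes", target, restart_cmd])
    return cmds


def push(src: str, hosts: list[str], dest: str, restart_cmd: Optional[str] = None,
         dry_run: bool = True) -> None:
    """Push to each host in turn; with dry_run=False, stop at the first failing command."""
    for host in hosts:
        for cmd in push_commands(src, host, dest, restart_cmd=restart_cmd):
            print(("DRY-RUN " if dry_run else "") + shlex.join(cmd))
            if not dry_run:
                subprocess.run(cmd, check=True)  # raises CalledProcessError on failure


if __name__ == "__main__":
    # Hypothetical hosts, paths and service name.
    push("build/", ["app1.example.com", "app2.example.com"], "/opt/egov",
         restart_cmd="sudo systemctl restart egov")
```

Pushing host by host and stopping on the first failure keeps a broken build from reaching the whole fleet; configuration management tools offer the same idea with richer orchestration.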

Use of crontab.

Running jobs in the background; use of Nohup.

Use of screen to launch long-running jobs.

Jenkins as a process manager.
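Background jobs can also be started from automation scripts rather than an interactive shell. A minimal, POSIX-oriented sketch using Python's `subprocess` module, where `start_new_session=True` puts the child in its own session, which approximates `nohup command >> job.log 2>&1 &`; the command and log path are hypothetical, and recurring jobs are still better scheduled with crontab.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def launch_detached(cmd: list[str], log_path: str) -> subprocess.Popen:
    """Start `cmd` in the background with stdout/stderr appended to `log_path`.

    Roughly `nohup cmd >> log 2>&1 &`: on POSIX the child gets its own session
    (setsid), so it is not hung up when the launching terminal session ends.
    """
    log = open(log_path, "ab")
    try:
        return subprocess.Popen(
            cmd,
            stdin=subprocess.DEVNULL,
            stdout=log,
            stderr=subprocess.STDOUT,
            start_new_session=True,
        )
    finally:
        log.close()  # the child keeps its own duplicate of the file descriptor


if __name__ == "__main__":
    log_file = Path(tempfile.gettempdir(), "demo-job.log")  # hypothetical log location
    proc = launch_detached([sys.executable, "-c", "print('job finished')"], str(log_file))
    print("started background job with PID", proc.pid)
```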

Typical uses of find, df, du, etc.

A comparison of popular distributions.

Checking OS release and system info.

Package management differences.

OS Internals and Commands

Typical uses of sed, awk, grep, tr, etc.

Scripting using Perl and Python.

Regular expressions.

Support for regular expressions in Perl and Python.
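Regular expressions are where grep/sed/awk habits carry over into Python scripting. A small sketch with Python's `re` module that parses access-log lines, assuming the Common Log Format, and reproduces two typical one-liners: counting responses per status code and listing paths that returned 5xx. The sample lines are made up.

```python
import re
from collections import Counter

# Common Log Format, e.g.:
# 10.0.0.5 - - [10/Oct/2023:13:55:36 +0000] "GET /health HTTP/1.1" 200 512
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) (?P<size>\d+|-)'
)


def status_counts(lines):
    """Count responses per status code; lines that do not match are skipped."""
    counts = Counter()
    for line in lines:
        m = LOG_LINE.match(line)
        if m:
            counts[m.group("status")] += 1
    return counts


def error_paths(lines):
    """Paths that returned 5xx, roughly awk '$9 ~ /^5/ {print $7}' on the same log."""
    return [m.group("path") for m in map(LOG_LINE.match, lines)
            if m and m.group("status").startswith("5")]


if __name__ == "__main__":
    sample = [
        '10.0.0.5 - - [10/Oct/2023:13:55:36 +0000] "GET /health HTTP/1.1" 200 512',
        '10.0.0.6 - - [10/Oct/2023:13:55:37 +0000] "POST /api/report HTTP/1.1" 500 -',
        'not a log line',
    ]
    print(status_counts(sample))  # Counter({'200': 1, '500': 1})
    print(error_paths(sample))    # ['/api/report']
```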

Sample usages and steps to install these tools:

nc

netstat

traceroute

vmstat

lsof

top

nslookup

ping

tcpdump

dig

sar

uptime

ifconfig

route
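As an example of what these tools check, the reachability test usually done with `nc -z` can be reproduced with Python's standard `socket` module. A minimal sketch; the targets are hypothetical, and it only confirms that a TCP handshake succeeds, not that the service behind the port is healthy.

```python
import socket


def port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """True if a TCP handshake with host:port succeeds, like `nc -z -w 3 host port`."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, timed out or unresolvable
        return False


if __name__ == "__main__":
    # Hypothetical local services to probe.
    for host, port in [("127.0.0.1", 5432), ("127.0.0.1", 8080)]:
        state = "open" if port_open(host, port, timeout=1.0) else "closed/unreachable"
        print(f"{host}:{port} {state}")
```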

One of the attributes that helps differentiate a DevOps engineer from other members in the operations team, like sysadmins, DBAs, and operations support staff, is his or her ability to write code. The coding and scripting skill is just one of the tools in the DevOps toolbox, but it's a powerful one that a DevOps engineer would maintain as part of practising his or her trade.